
Set up build environments

Virtual Box

To build a cross platform application, you need to build in a cross platform environment.

Setting up Ubuntu in Virtual Box

Having a whole lot of different versions of different machines, with a whole lot of snapshots, can suck up a remarkable amount of disk space mighty fast. Even if your virtual disk is quite small, your snapshots wind up eating a huge amount of space, so you really need some capacious disk drives. And you are not going to be able to back up all this enormous stuff, so you have to document how to recreate it.

Each snapshot that you intend to keep around long term needs to correspond to a documented path from install to that snapshot.

When creating a Virtual Box machine, set the network adapter type to the paravirtualized network adapter, set the default machine folder in File/Preferences, and set the virtual hard disk and the snapshot directory to the desired location. The virtual hard disk location is chosen when you create the disk; the snapshot directory is set in Settings/General/Advanced, which is also where you turn on clipboard sharing.

apt-get -qy update && apt-get -qy upgrade

# Fetches the list of available updates and

# Strictly upgrades the current packages

To install guest additions, and thus allow full communication between host and virtual machine, update Ubuntu, then, while Ubuntu is running, simulate placing the guest additions CD in the simulated optical drive (Devices/Insert Guest Additions CD image). Ubuntu will then correctly activate and run the guest additions install.

Installing guest additions frequently runs into trouble. Debian especially tends to have security in place to stop random people from sticking in CDs that get root access to the OS to run code to amend the OS in ways the developers did not anticipate.

Setting up Debian in Virtual Box

To install guest additions on Debian:

su -l root

apt-get -qy update && apt-get -qy install build-essential module-assistant git dialog rsync && m-a -qi prepare

apt-get -qy upgrade

mount -t iso9660 /dev/sr0 /media/cdrom

cd /media/cdrom0 && sh ./VBoxLinuxAdditions.run

usermod -a -G vboxsf cherry

You will need to do another m-a prepare, and to reinstall guest additions, after an apt-get -qy dist-upgrade. Sometimes you need to do this after a mere upgrade of Debian or of Guest Additions. Every now and then, guest additions gets mysteriously broken on Debian by automatic operating system updates in the background: the system will not shut down correctly, and guest additions has to be reinstalled, followed by a shutdown -r. Or copy and paste mysteriously stops working.

On Debian with lightdm and MATE, go to System/Control Center/Look and Feel/Screensaver and turn off the screensaver screen lock.

Go to System/Control Center/Hardware/Power Management and turn off computer and screen sleep.

To set automatic login on lightdm-mate

nano /etc/lightdm/lightdm.conf

In the [Seat:*] section of the configuration file (there is another section of this configuration file where these changes have no apparent effect) edit

#autologin-guest=false

#autologin-user=user

#autologin-user-timeout=0

to

autologin-guest=false

autologin-user=cherry

autologin-user-timeout=0
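If you would rather script this edit than do it in nano, here is a minimal sketch. It assumes GNU sed and a lightdm.conf laid out like Debian's, with the commented-out defaults shown above. The function name set_lightdm_autologin is hypothetical. The sed address range confines the substitutions to the lines between [Seat:*] and the next section header, because the same keys in the other section have no apparent effect. The demo runs on a scratch file, not on the real configuration.

```shell
#!/usr/bin/env bash
# Sketch: turn on automatic login in the [Seat:*] section of a lightdm.conf.
# Assumes GNU sed (-E, -i). Only lines from "[Seat:*]" up to the next
# "[section]" header are touched; other sections are left alone.
set_lightdm_autologin() {
  local conf="$1" user="$2"
  sed -E -i \
    -e '/^\[Seat:\*\]/,/^\[/ s/^#?autologin-guest=.*/autologin-guest=false/' \
    -e "/^\[Seat:\*\]/,/^\[/ s/^#?autologin-user=.*/autologin-user=${user}/" \
    -e '/^\[Seat:\*\]/,/^\[/ s/^#?autologin-user-timeout=.*/autologin-user-timeout=0/' \
    "$conf"
}

# Demo on a scratch file. For real use, as root:
#   set_lightdm_autologin /etc/lightdm/lightdm.conf cherry
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
printf '%s\n' '[LightDM]' '#autologin-user=' \
  '[Seat:*]' '#autologin-guest=false' '#autologin-user=user' '#autologin-user-timeout=0' >"$demo"
set_lightdm_autologin "$demo" cherry
cat "$demo"
```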

In the shared directory, I have a copy of /etc and ~/.ssh ready to roll, so I just go into the shared directory, copy them over, chmod .ssh, and reboot.

On the source machine

scp -r .ssh «destination»:~

scp -r etc «destination»:/

On the destination machine

chmod 700 .ssh && chmod 600 .ssh/*
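The two chmod commands above can be wrapped in a small function. This is a sketch, and the name fix_ssh_perms is hypothetical. It uses find rather than the .ssh/* glob, because the glob misses dot files and fails on an empty directory. The permissions matter for two reasons. sshd, with its default StrictModes yes, refuses an authorized_keys file that group or others can write to. The ssh client also refuses a private key that other users can read.

```shell
#!/usr/bin/env bash
# Sketch: give an ssh directory the permissions sshd and ssh expect:
# the directory 700, every regular file inside it 600.
fix_ssh_perms() {
  local dir="${1:-$HOME/.ssh}"
  chmod 700 "$dir"
  # find, unlike the .ssh/* glob, also catches dot files and copes with an empty directory
  find "$dir" -type f -exec chmod 600 {} +
}

# Demo on a scratch directory. For real use: fix_ssh_perms ~/.ssh
demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT
mkdir "$demo/.ssh"
touch "$demo/.ssh/authorized_keys" "$demo/.ssh/config"
chmod 755 "$demo/.ssh"
chmod 644 "$demo/.ssh/config"
fix_ssh_perms "$demo/.ssh"
stat -c '%a %n' "$demo/.ssh" "$demo/.ssh/config"
```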

I cannot do it all from within the destination machine, because linux cannot follow windows symbolic links.

Set the hostname

Check the hostname and DNS domain name with

hostname && domainname -s && hostnamectl status

And if need be, set them with

domainname -b reaction.la

hostnamectl set-hostname reaction.la

Your /etc/hosts file should contain

127.0.0.1 localhost

127.0.0.1 reaction.la

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

ff02::3 ip6-allhosts
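To check the result without reading the file by eye, you could use something like the following sketch. It assumes the usual /etc/hosts layout: an address followed by one or more names, with # starting a comment. The function name hosts_has_entry is hypothetical. getent hosts reaction.la is another way to see what the resolver actually returns, because it goes through nsswitch instead of reading the file directly.

```shell
#!/usr/bin/env bash
# Sketch: succeed if FILE maps IP to NAME (comments ignored, any name column).
hosts_has_entry() {
  local file="$1" ip="$2" name="$3"
  awk -v ip="$ip" -v name="$name" '
    { sub(/#.*/, "") }                                  # strip comments
    $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
    END { exit !found }
  ' "$file"
}

# Demo on a scratch file. For real use:
#   hosts_has_entry /etc/hosts 127.0.0.1 "$(hostname)" || echo "add $(hostname) to /etc/hosts"
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
printf '%s\n' '127.0.0.1 localhost' '127.0.0.1 reaction.la' '# ::1 old.example' >"$demo"
hosts_has_entry "$demo" 127.0.0.1 reaction.la && echo "reaction.la -> 127.0.0.1"
```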

To change the host ssh key, so that different hosts have different host keys after I have copied everything to a new instance:

cd /etc/ssh

cat sshd* |grep HostKey

#Make sure that `/etc/ssh/sshd_config` has the line

# HostKey /etc/ssh/ssh_host_ed25519_key

rm -v ssh_host*

ssh-keygen -t ed25519 -f /etc/ssh/ssh_host_ed25519_key

Note that Visual Studio remote compile requires an ecdsa-sha2-nistp256 key on the host machine that it is remote compiling for. If it is NIST, it is backdoored.

If the host has a domain name, the default in /etc/bash.bashrc will not display it in full at the prompt, which can lead to you being confused about which host on the internet you are commanding.

nano /etc/bash.bashrc

Change the lower case h in PS1='${debian_chroot:+($debian_chroot)}\u@\h:\w\$ ' to an upper case H

PS1='${debian_chroot:+($debian_chroot)}\u@\H:\w\$ '

And, similarly, in two places in /etc/skel/.bashrc. Also:

cp -rv ~/.ssh /etc/skel
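You can make the same \h to \H change with sed instead of nano. Here is a minimal sketch, assuming GNU sed. The function name show_full_hostname is hypothetical. It rewrites \u@\h to \u@\H on every line that sets PS1, which covers both places in the Debian /etc/skel/.bashrc, and also the xterm title line if there is one. Existing users got their ~/.bashrc from /etc/skel before the change, so their copies need the same edit.

```shell
#!/usr/bin/env bash
# Sketch: make the prompt show the full hostname (\H) instead of the
# first component (\h), on every line of FILE that assigns PS1.
show_full_hostname() {
  sed -i '/PS1=/ s/\\u@\\h/\\u@\\H/g' "$1"
}

# Demo on a scratch copy of Debian-style prompt lines. For real use, as root:
#   show_full_hostname /etc/bash.bashrc && show_full_hostname /etc/skel/.bashrc
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
cat >"$demo" <<'EOF'
    PS1='${debian_chroot:+($debian_chroot)}\[\033[01;32m\]\u@\h\[\033[00m\]:\[\033[01;34m\]\w\[\033[00m\]\$ '
    PS1='${debian_chroot:+($debian_chroot)}\u@\h:\w\$ '
EOF
show_full_hostname "$demo"
cat "$demo"
```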

Actual server

Setting up an actual server is similar to setting up the virtual machine modelling it, except you have to worry about the server getting overloaded and locking up.

If a server is configured with an ample swap file, an overloaded server will lock up and have to be ungracefully powered down, which can corrupt the data on the server. If the swap file is inadequate, the OOM killer will shut down processes, which is also very bad, but does not risk losing data. So out of the box, servers tend to be configured with a grossly inadequate swap file, so that they will fail gracefully under overload, rather than locking up, needing to be powered down, and then needing to be recreated from scratch because of data corruption.

This looks to me like a kernel defect. The kernel should detect when it is thrashing the swap file, and respond by sleeping entire processes for lengthy and growing periods, and logging these abnormally long sleeps on wake. Swapping should never escalate to lockup, and if it does, that is bad memory management design, though this misfeature seems common to most operating systems.

I prefer an ample swap file, larger than total memory, plus thrash-protect, which results in comparatively graceful degradation. In addition, the existence of the file /tmp/thrash-protect-frozen-pid-list will tell you that your overloaded server is degrading (if it is not degrading, the file exists only briefly).
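For the swap file itself, here is a sketch of choosing a size larger than total memory. The sizing rule (RAM rounded up to whole GiB, plus one more GiB) is my own assumption and is not a thrash-protect recommendation. The function name suggest_swap_mib is hypothetical. It reads MemTotal, which is given in kB, from /proc/meminfo. The commands that create the file are the standard ones for ext4. They appear only as comments because they need root.

```shell
#!/usr/bin/env bash
# Sketch: suggest a swap file size in MiB that is larger than total RAM.
# Assumed rule: RAM rounded up to whole GiB, plus one extra GiB.
suggest_swap_mib() {
  local meminfo="${1:-/proc/meminfo}"
  awk '/^MemTotal:/ {
         mib = int(($2 + 1023) / 1024)     # kB -> MiB, rounded up
         gib = int((mib + 1023) / 1024)    # MiB -> GiB, rounded up
         swap = (gib + 1) * 1024           # one GiB more than RAM, in MiB
         print swap
       }' "$meminfo"
}

# Creating the swap file (as root, ext4 assumed), not run here:
#   size=$(suggest_swap_mib)
#   fallocate -l "${size}M" /swapfile && chmod 600 /swapfile
#   mkswap /swapfile && swapon /swapfile
#   echo '/swapfile none swap sw 0 0' >>/etc/fstab

# Demo with a made-up meminfo line for a machine with about 3.8 GiB of RAM:
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
echo 'MemTotal:        4028560 kB' >"$demo"
suggest_swap_mib "$demo"    # prints 5120
```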


VM pretending to be cloud server

To have it look like a cloud server, but one you can easily snapshot and restore, set it up in bridged mode. Note the MAC address. After it is running as a normal system, and you can browse the web with it, after guest additions and all that, shut it down, go to your router, and give it a new static IP and a new entry in hosts.

Then configure ssh access to root, so that you can ssh «server» as if on your real cloud system. See Setting up a headless server in the cloud.

On a system that only I have physical access to, and which runs no services that can be accessed from outside my local network, my username is always the same and the password is always a short, easily guessed single word. Obviously if your system is accessible to the outside world, you need a strong password. An easy password could be really bad if openssh-server is installed and ssh can be accessed from outside. If you are building a headless machine with openssh-server (the typical cloud or remote system), then you need to set up public key sign in only, if the machine is to contain anything valuable. Passwords are just not good enough. You want your private ssh key on a machine that only you have physical access to, that runs no services anyone on the internet has access to, and that you don’t use for anything that might get it infected with malware. You then use that private key to access more exposed machines by ssh, through the corresponding public key.

apt-get -qy update && apt-get -qy upgrade

# Fetches the list of available updates and

# strictly upgrades the current packages

To automatically start virtual boxes on bootup, which we will need to do if publishing them, open VirtualBox, right click on the VM you want to autostart, click the option to create a shortcut on the desktop, and cut the shortcut. Open the Windows 10 “Run” box (Win+R), enter shell:startup, and paste the shortcut. But all this is far too much work if we are not publishing them.

If a virtual machine is always running, make sure that the close default is to save state, for otherwise shutdown might take too long, and windows might kill it when updating.

If we have a GUI, don’t do openssh. Terminal comes up with Ctrl+Alt+T.

Directory Structure

Linux

/usr - Secondary hierarchy for read-only user data; contains the majority of (multi-)user utilities and applications.

/usr/bin - Non-essential command binaries (not needed in single user mode); for all users.

/usr/include - Standard include files grouped in subdirectories, for example /usr/include/boost.

/usr/lib - Libraries for the binaries in /usr/bin and /usr/sbin.

/usr/lib<qual> - Alternate format libraries, e.g. /usr/lib32 for 32-bit libraries on a 64-bit machine (optional).

/usr/local - Tertiary hierarchy for local data, specific to this host. Typically has further subdirectories, e.g., bin, lib, share.

/usr/sbin - Non-essential system binaries, e.g., daemons for various network services. The blockchain daemon goes here.

/usr/share - Architecture-independent (shared) data. The blockchain goes in a subdirectory here.

/usr/src - Source code. Generally release versions of source code. Source code that the particular user is actively working on goes in that user’s ~/src/ directory, not this directory.

~/.<program> - Data maintained by and for specific programs for the particular user; for example, in Unix ~/.bitcoin is the equivalent of %APPDATA%\Bitcoin in Windows.

~/.config/<program> - Config data maintained by and for specific programs for the particular user, so that the user’s home directory does not get cluttered with a hundred .<program> directories.

~/.local/<program> - Files maintained by and for specific programs for the particular user.

~/src/ - Source code that you, the particular user, are actively working on, the equivalent of %HOMEPATH%\src\ in Windows.

~/src/include - Header files, so that they can be referenced in your source code by the expected header path. For example, this directory will contain, by copying or hard linking, the boost directory, so that standard boost includes work.
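Linux cannot hard link a directory itself. However, cp -al recreates the directory tree and hard links every file inside it, which achieves what is described above for ~/src/include. The sketch below assumes GNU cp and a source and destination on the same filesystem. Hard links cannot cross filesystems, so if /home is a separate partition, use plain cp -a. The function name link_headers and the demo paths are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: make SRC_DIR available under DEST_DIR by hard linking its files.
# cp -a preserves the tree and attributes; -l links files instead of copying.
link_headers() {
  local src="$1" dest="$2"
  mkdir -p "$dest"
  cp -al "$src" "$dest/"
}

# For real use, something like: link_headers /usr/include/boost ~/src/include
# Demo on a scratch tree:
demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT
mkdir -p "$demo/usr/include/boost"
echo '// stand-in header' >"$demo/usr/include/boost/version.hpp"
link_headers "$demo/usr/include/boost" "$demo/src/include"
ls -i "$demo/usr/include/boost/version.hpp" "$demo/src/include/boost/version.hpp"
```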

Setting up a headless server in the cloud

Setting up ssh

Login by password is second class, and there are a bunch of esoteric special cases where it does not quite 100% work in all situations, because stuff wants to auto log you in without asking for input.

Putty is the Windows ssh client, but you can use the Linux ssh client in Windows in the Git Bash shell, and the Linux remote file copy utility scp is way better than the PuTTY utility PSFTP.

Usually a command line interface is a pain and error prone, with a multitude of mysterious and inexplicable options and parameters, and one typo or out of order command can cause your system to die unrecoverably. But even though Putty has a windowed interface, the command line interface of bash is easier to use.

It is easier in practice to use the bash (or, on Windows, git-bash) to manage keys than PuTTYgen. You generate a key pair with

ssh-keygen -t ed25519 -f keyfile

(I don't trust the other key algorithms, because I suspect the NSA has been up to cleverness with the details of the implementation.)

On Windows, your secret key should be in %HOMEPATH%/.ssh, on Linux in /home/cherry/.ssh, as is your config file for your ssh client, listing the keys for hosts. The public keys of your authorized keys are in /home/cherry/.ssh/authorized_keys, enabling you to log in from afar as that user over the internet. The Linux system for remote login is a cleaner and simpler system than the multitude of mysterious, complicated, and failure prone facilities for remote Windows login, which is a major reason why everyone is using Linux hosts in the cloud.

In Debian, I create the directory ~/.ssh for the user, and, using the editor nano, the file authorized_keys:

mkdir ~/.ssh

nano ~/.ssh/authorized_keys

chmod 700 ~/.ssh

chmod 600 ~/.ssh/*
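Appending the key from the shell, instead of pasting it in with nano, avoids line-wrapping accidents and duplicate entries. This is a sketch. The function name add_authorized_key is hypothetical, and the key string in the demo is made up and is not a valid key. On the client side, ssh-copy-id (which ships with the OpenSSH client) does much the same job, but it needs some way to log in already, normally a password.

```shell
#!/usr/bin/env bash
# Sketch: append one public key line to authorized_keys unless it is already
# there, creating ~/.ssh with the permissions sshd expects.
add_authorized_key() {
  local pubkey="$1" dir="${2:-$HOME/.ssh}"
  local file="$dir/authorized_keys"
  mkdir -p "$dir" && chmod 700 "$dir"
  touch "$file" && chmod 600 "$file"
  # -x whole line, -F fixed string: the key text is not treated as a regex
  grep -qxF -- "$pubkey" "$file" || printf '%s\n' "$pubkey" >>"$file"
}

# Demo on a scratch directory with a made-up (not valid) key line.
demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT
key='ssh-ed25519 AAAAexampleNotARealKey cherry@laptop'
add_authorized_key "$key" "$demo/.ssh"
add_authorized_key "$key" "$demo/.ssh"   # second call adds nothing
cat "$demo/.ssh/authorized_keys"
```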

In PuTTY, I set the ssh session host IP under /Session, the auto login username under /Connection/Data, and the autologin private key under /Connection/SSH/Auth.

If I need KeepAlive I set that under /Connection

I make sure auto login works, which enables me to make ssh do all sorts of things, then I disable ssh password login, and restrict root login so that it is only permitted via ssh keys.

In order to do this, open up the sshd config file (which is the ssh daemon config, not ssh_config; if you put this into the ssh_config file, everything goes to hell in a handbasket, since ssh_config is the global equivalent of the client’s ~/.ssh/config file):

nano /etc/ssh/sshd_config

Your config file should have in it

HostKey /etc/ssh/ssh_host_ed25519_key

X11Forwarding yes

AllowAgentForwarding yes

AllowTcpForwarding yes

TCPKeepAlive yes

AllowStreamLocalForwarding yes

GatewayPorts yes

PermitTunnel yes

PasswordAuthentication no

ChallengeResponseAuthentication no

UsePAM no

PermitRootLogin prohibit-password

ciphers chacha20-poly1305@openssh.com

macs hmac-sha2-256-etm@openssh.com

kexalgorithms curve25519-sha256

pubkeyacceptedkeytypes ssh-ed25519

hostkeyalgorithms ssh-ed25519

hostbasedacceptedkeytypes ssh-ed25519

casignaturealgorithms ssh-ed25519

PermitRootLogin defaults to prohibit-password, but it is best to set it explicitly. Within that file, find the line that includes PermitRootLogin, and if it is enabled, modify it to ensure that users can only connect with their ssh key.

ssh out of the box by default allows every cryptographic algorithm under the sun, but we know the NSA has been industriously backdooring cryptographic code, sometimes at the level of the algorithm itself, as with their infamous elliptic curves, but more commonly at the level of implementation and api, ensuring that secure algorithms are used in a way that is insecure against someone who has the backdoor, insecurely implementing secure algorithms. On the basis of circumstantial evidence and social connections, I believe that much of the cryptographic code used by ssh has been backdoored by the NSA, and that this is a widely shared secret.

They structure the api so as to make it overwhelmingly likely that the code will be used insecurely, and subtly tricky to use securely, and then make sure that it is used insecurely. It is usually not that the core algorithms are backdoored, as that the backdoor is on a more human level, gently steering the people using core algorithms into a hidden trap.

The backdoors are generally in the interfaces between layers, the apis, which are subtly mismatched, and if you point at the backdoor they say "that is not a backdoor, the code is fine, that issue is out of scope. File a bug report against someone else's code. Out of scope, out of scope."

And if you were to file a bug report against someone else's code, they would tell you they are using this very secure NSA approved algorithm with the approved and very secure api, the details of the cryptography are someone else's problem, "out of scope, out of scope", and they have absolutely no idea what you are talking about, because what you are talking about is indeed very obscure, subtle, complicated, and difficult to understand. The backdoors are usually where one api maintained by one group is using a subtly flawed api maintained by another group.

The more algorithms permitted, the more places for backdoors. The certificate algorithms are particularly egregious. Why should we ever allow more than one algorithm, the one we most trust?

Therefore, I restrict the allowed algorithms to those that I actually use, and only use the ones I have reason to believe are good and securely implemented. Hence the lines:

HostKey /etc/ssh/ssh_host_ed25519_key

ciphers chacha20-poly1305@openssh.com

macs hmac-sha2-256-etm@openssh.com

kexalgorithms curve25519-sha256

pubkeyacceptedkeytypes ssh-ed25519

hostkeyalgorithms ssh-ed25519

hostbasedacceptedkeytypes ssh-ed25519

casignaturealgorithms ssh-ed25519

Not all ssh servers recognize all these configuration options, and if you give an unrecognized configuration option, the server dies, and then you cannot ssh in to fix it. But they all recognize the first three, HostKey, ciphers, and macs, which are the three that matter most.
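A bad sshd_config can lock you out, so it is worth checking the file before you restart. Running sshd -t as root only checks the validity of the configuration file and keys, and then exits, so it catches unrecognized options. sshd -T prints the effective configuration. The sketch below is a separate and cruder check. It assumes the directives sit directly in the given file, and it does not follow Include lines. It reports any of the restrictions above that are missing, commented out, or set to a different value, comparing keywords case-insensitively as sshd does. The function name check_sshd_hardening is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: report hardening directives that are missing, commented out, or
# set differently in an sshd_config file. Does not follow Include lines, and
# does not notice an earlier conflicting line (sshd uses the first value).
check_sshd_hardening() {
  local conf="$1" key val status=0
  while read -r key val; do
    if ! awk -v k="$key" -v v="$val" '
           tolower($1) == tolower(k) && $2 == v { found = 1 }
           END { exit !found }' "$conf"; then
      echo "missing or different: $key $val"
      status=1
    fi
  done <<'EOF'
HostKey /etc/ssh/ssh_host_ed25519_key
PasswordAuthentication no
PermitRootLogin prohibit-password
Ciphers chacha20-poly1305@openssh.com
MACs hmac-sha2-256-etm@openssh.com
KexAlgorithms curve25519-sha256
EOF
  return "$status"
}

# Demo on a scratch file in which KexAlgorithms is still commented out.
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
printf '%s\n' 'HostKey /etc/ssh/ssh_host_ed25519_key' 'PasswordAuthentication no' \
  'PermitRootLogin prohibit-password' 'ciphers chacha20-poly1305@openssh.com' \
  'macs hmac-sha2-256-etm@openssh.com' '#kexalgorithms curve25519-sha256' >"$demo"
check_sshd_hardening "$demo" || echo "fix $demo before restarting sshd"
# For real use, as root:
#   check_sshd_hardening /etc/ssh/sshd_config && sshd -t && shutdown -r now
```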

To put these changes into effect:

shutdown -r now

Now that putty can do a non-interactive login, you can use plink to have a script in a client window execute a program on the server and echo the output to the client, and psftp to transfer files, though scp in the Git Bash window is better, and rsync (Unix to Unix only, requires rsync installed on both computers) is the best. scp and rsync, like git, get their keys from ~/.ssh/config.

On Windows, FileZilla uses putty private keys to do sftp. This is a much more user friendly and safer interface than using scp, because it is harder to issue a catastrophic command, but rsync is more broadly capable.

Life is simpler if you run FileZilla under linux, whereupon it uses the same keys and config as everyone else.

All in all, on Windows, it is handier to interact with Linux machines using the Git Bash command window than using putty, once you have set up ~/.ssh/config on Windows.

Of course Windows machines are insecure, and it is safer to have your keys and your ~/.ssh/config on Linux.

Putty on Windows is not bad when you figure out how to use it, but ssh in the Git Bash shell is better:

You paste stuff into the terminal window with right click, drag stuff out of the terminal window with the mouse, and use nano to edit stuff in the ssh terminal window.

Once you can ssh into your cloud server without a password, you need to update it and secure it with ufw. You also need rsync, to move files around.

Remote graphical access over ssh

ssh -CX root@reaction.la

C (upper case) stands for compression, and X for X11. Lower case -c selects a cipher, so -cX would try to use a cipher named X.

-X overrides the per host setting in ~/.ssh/config:

ForwardX11 yes

ForwardX11Trusted yes

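These per host settings override the Host * settings in ~/.ssh/config (provided the per host block comes first, since ssh takes the first value it finds for each setting), which in turn override the settings for all users in /etc/ssh/ssh_config.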

If ForwardX11 is set to yes, as it should be, you do not need the -X. Running a GUI app over ssh just works. There is a collection of useless toy apps, x11-apps, for test and demonstration purposes.

I never got this working in Windows, because of no end of mysterious configuration issues, but it works fine on Linux.

Then, as root on the remote machine, you issue a command to start up the graphical program, which runs as an X11 client on the remote machine, as a client of the X11 server on your local machine. This is a whole lot easier than setting up VNC.

If your machine is running inside an OracleVM, and you issue the command startx as root on the remote machine to start the remote machine’s desktop in the X11 server on your local OracleVM, it instead seems to start up the desktop in the OracleVM X11 server on your OracleVM host machine. Whatever, I am confused, but the OracleVM X11 server on Windows just works for me, and the Windows X11 server just does not. On Linux, it just works.

Everyone uses VNC rather than SSH, but configuring login and security on VNC is a nightmare. The only usable way to do it is to turn off all security on VNC, use ufw to shut off outside access to the VNC host’s port, and access the VNC host through SSH port forwarding.

X11 results in a vast amount of unnecessary round tripping, with the result that things get unusable when you are separated from the other computer by a significant ping time. VNC has less of a ping problem.

X11 is a superior solution if your ping time is a few milliseconds or less.

VNC is a superior solution if your ping time is humanly perceptible, fifty milliseconds or more. In between, it depends.

I find no solution satisfactory. Graphic software really is not designed to be used remotely. Javascript apps are. If you have a program or functionality intended for remote use, the gui for that capability has to be javascript/css/html. Or you design a local client or master that accesses and displays global host or slave information.

If you must use graphic software remotely and have a significant ping time, the best solution is to use VNC over SSH, albeit VNC always exports an entire desktop, while X11 exports a single window. Though really, the best solution is to not use graphic software remotely, except for apps.

Install minimum standard software on the cloud server

apt-get -qy update && apt-get -qy install build-essential module-assistant dialog rsync ufw

cat /etc/default/ufw | sed 's/^\#*[[:blank:]]*MANAGE_BUILTINS[[:blank:]]*=.*$/MANAGE_BUILTINS=yes/g' >tempufw

mv tempufw /etc/default/ufw

chmod 600 /etc/default/ufw

ufw status verbose

ufw disable

ufw default deny incoming && ufw default allow outgoing

ufw allow ssh && ufw limit ssh/tcp

ufw --force enable && ufw status verbose

Backing up a cloud server

rsync is the utility to synchronize directories locally and remotely; for remote copies it normally runs over openssh.

Assume rsync is installed on both machines, and you have root logon access by openssh to the remote_host.

Shut down any daemons that might cause a disk write during backup, which would be disastrous. Log in as root at both ends, or else files cannot be accessed at one end, nor permissions preserved at the other.

rsync -aAXvzP --delete remote_host:/ --exclude={"/dev/*","/proc/*","/sys/*","/tmp/*","/run/*","/media/*","/lost+found"} local_backup

Of course, being root at both ends enables you to easily cause catastrophe at both ends with a single typo in rsync.
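The --exclude={...} form relies on bash brace expansion. The shell, not rsync, expands it into one --exclude= argument per pattern. In a plain /bin/sh script such as dash, there is no brace expansion, so rsync receives one literal pattern that matches nothing you intended. The sketch below shows what rsync actually receives. It also shows an alternative that uses rsync's --exclude-from option, which reads one pattern per line from a file. The variable names are hypothetical, and the rsync lines appear only as comments.

```shell
#!/usr/bin/env bash
# What rsync actually receives: bash expands the braces into one argument per
# pattern. The quotes stop the shell from globbing the * itself.
args=(--exclude={"/dev/*","/proc/*","/sys/*","/tmp/*","/run/*","/media/*","/lost+found"})
printf '%s\n' "${args[@]}"
#   rsync -aAXvzP --delete remote_host:/ "${args[@]}" local_backup

# Shell-independent alternative: one pattern per line in a file,
# passed with rsync's --exclude-from option.
excl=$(mktemp)
trap 'rm -f "$excl"' EXIT
printf '%s\n' '/dev/*' '/proc/*' '/sys/*' '/tmp/*' '/run/*' '/media/*' '/lost+found' >"$excl"
#   rsync -aAXvzP --delete --exclude-from="$excl" remote_host:/ local_backup
```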

To simply logon with ssh

ssh remote_host

To synchronize just one directory.

rsync -aAXvzP --delete remote_host:~/name .

To make sure the files are truly identical:

rsync -aAXvzc --delete reaction.la:~/name .

rsync, ssh, git and so forth know how to log on from ~/.ssh/config (not to be confused with /etc/ssh/sshd_config or /etc/ssh/ssh_config):

Host remote_host

HostName remote_host

Port 22

IdentityFile ~/.ssh/id_ed25519

User root

ServerAliveInterval 60

TCPKeepAlive yes

Git on Windows uses %HOMEPATH%/.ssh/config, and that is how it knows what key to use.
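A script can add a Host block like the one above without creating duplicates. This is a sketch. The function name add_ssh_host is hypothetical, and the demo values are the placeholders from the text. To confirm that ssh picks up the block, ssh -G remote_host prints the configuration ssh will actually use for that host.

```shell
#!/usr/bin/env bash
# Sketch: append a Host block to an ssh client config unless a "Host ALIAS"
# line already exists. ssh expands a literal ~ in IdentityFile itself.
add_ssh_host() {
  local alias="$1" hostname="$2" user="$3" key="$4" conf="${5:-$HOME/.ssh/config}"
  if awk -v a="$alias" 'tolower($1) == "host" && $2 == a { f = 1 } END { exit !f }' \
       "$conf" 2>/dev/null; then
    return 0   # already configured
  fi
  cat >>"$conf" <<EOF
Host ${alias}
  HostName ${hostname}
  Port 22
  IdentityFile ${key}
  User ${user}
  ServerAliveInterval 60
  TCPKeepAlive yes
EOF
  chmod 600 "$conf"
}

# Demo on a scratch file; for real use leave off the last argument.
demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT
add_ssh_host remote_host remote_host root '~/.ssh/id_ed25519' "$demo/config"
add_ssh_host remote_host remote_host root '~/.ssh/id_ed25519' "$demo/config"  # no duplicate
cat "$demo/config"
```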

To locally do a backup of the entire machine, excluding of course your /local_backup directory, which would cause an infinite loop:

rsync -raAvX --delete / --exclude={"/dev/*","/proc/*","/sys/*","/tmp/*","/run/*","/local_backup/*","/media/*","/lost+found"} /local_backup

The a option means archive mode: copy recursively, preserving the exact file structure with permissions, times, owners, and symlinks. The A option adds ACLs, and X adds extended attributes. The z option is for compression of data in motion. The data is uncompressed at the destination, so when backing up local data locally, we don’t use it.

To locally just copy stuff from the Linux file system to the windows file system

rsync -acv --del source dest/

Which will result in the directory structure dest/source

To merge two directories which might both have updates:

rsync -acv source dest/

A common error and source of confusion is that:

rsync -a dir1/ dir2

means make dir2 contain the same contents as dir1, while

rsync -a dir1 dir2

is going to put a copy of dir1 inside dir2

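Since a copy can potentially take a very long time, you want the -v flag, so you can see what it is doing.

The -P flag (--partial --progress) shows progress and keeps partially transferred files, so that an interrupted transfer can resume. rsync always skips files it judges unchanged; the -c flag makes it judge by comparing checksums rather than file size and modification time, which is slower but more thorough. The -z flag does compression, which is good if your destination is far away.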

Apache

To bring up apache virtual hosting

Apache2 html files are at /var/www/<domain_name>/.

Apache’s virtual hosts are:

/etc/apache2/sites-available

/etc/apache2/sites-enabled

The apache2 directory looks like:

apache2.conf

conf-available

conf-enabled

envvars

magic

mods-available

mods-enabled

ports.conf

sites-available

sites-enabled

The sites-available directory looks like

000-default.conf

reaction.la.conf

default-ssl.conf

The sites enabled directory looks like

000-default.conf -> ../sites-available/000-default.conf

reaction.la-le-ssl.conf

reaction.la.conf

And the contents of reaction.la.conf are (before certbot has worked its magic):

<VirtualHost *:80>

ServerName reaction.la

ServerAlias www.reaction.la

ServerAlias «foo.reaction.la»

ServerAlias «bar.reaction.la»

ServerAdmin «me@mysite»

DocumentRoot /var/www/reaction.la

<Directory /var/www/reaction.la>

Options -Indexes +FollowSymLinks

AllowOverride All

</Directory>

ErrorLog ${APACHE_LOG_DIR}/reaction.la-error.log

CustomLog ${APACHE_LOG_DIR}/reaction.la-access.log combined

RewriteEngine on

RewriteCond %{HTTP_HOST} ^www\.reaction\.la$ [NC]

RewriteRule ^(.*)$ https://reaction.la/$1 [L,R=301]

</VirtualHost>

All the other files don’t matter. The conf file gets you to the named server. The contents of /var/www/reaction.la are the html files, the important one being index.html.

To get free, automatically installed and configured, ssl certificates and configuration

apt-get -qy install certbot python-certbot-apache

certbot register --register-unsafely-without-email --agree-tos

certbot --apache

If you have set up http virtual apache hosts for every name supported by your nameservers, and only those names, certbot automagically converts these from http virtual hosts to https virtual hosts and sets up redirect from http to https.

If you have an alias server such as www.reaction.la for reaction.la, certbot will guess you also have the domain name www.reaction.la and get a certificate for that.

Thus, after certbot has worked its magic, your conf file looks like

<VirtualHost *:80>

ServerName reaction.la

ServerAlias foo.reaction.la

ServerAlias bar.reaction.la

ServerAdmin me@mysite

DocumentRoot /var/www/reaction.la

<Directory /var/www/reaction.la>

Options -Indexes +FollowSymLinks

AllowOverride All

</Directory>

ErrorLog ${APACHE_LOG_DIR}/reaction.la-error.log

CustomLog ${APACHE_LOG_DIR}/reaction.la-access.log combined

RewriteEngine on

RewriteCond %{HTTP_HOST} ^www\.reaction\.la$ [NC]

RewriteRule ^(.*)$ https://reaction.la/$1 [L,R=301]

RewriteCond %{SERVER_NAME} =reaction.la [OR]

RewriteRule ^ https://%{SERVER_NAME}%{REQUEST_URI} [END,NE,R=permanent]

</VirtualHost>

LEMP stack on Debian

apt-get -qy update && apt-get -qy install nginx mariadb-server php php-cli php-xml php-mbstring php-mysql php7.3-fpm

nginx -t

ufw status verbose

Browse to your server, and check that the nginx web page is working. Your browser will probably give you an error page, merely because it defaults to https, and https is not yet working. Make sure you are testing http, not https. We will get https working shortly.

Mariadb and ufw

ufw default deny incoming && ufw default allow outgoing

ufw allow ssh && ufw allow 'Nginx Full' && ufw limit ssh/tcp

# edit /etc/default/ufw so that MANAGE_BUILTINS=yes

cat /etc/default/ufw | sed 's/^\#*[[:blank:]]*MANAGE_BUILTINS[[:blank:]]*=.*$/MANAGE_BUILTINS=yes/g' >tempufw

mv tempufw /etc/default/ufw

# "no" is bug compatibility with software long obsolete

ufw enable && ufw status verbose

# Status: active

# Logging: on (low)

# Default: deny (incoming), allow (outgoing), disabled (routed)

# New profiles: skip

# To Action From

# -- ------ ----

# 22/tcp (SSH) ALLOW IN Anywhere

# 80,443/tcp (Nginx Full) ALLOW IN Anywhere

# 22/tcp LIMIT IN Anywhere

# 22/tcp (SSH (v6)) ALLOW IN Anywhere (v6)

# 80,443/tcp (Nginx Full (v6)) ALLOW IN Anywhere (v6)

# 22/tcp (v6) LIMIT IN Anywhere (v6)

mysql_secure_installation

#empty root password

#Don't set a root password

#remove anonymous users

#disallow remote login

#drop test database

mariadb

You should now receive a message that you are in the mariadb console

CREATE DATABASE example_database;

GRANT ALL ON example_database.* TO 'example_user'@'localhost'

IDENTIFIED BY 'mypassword' WITH GRANT OPTION;

FLUSH PRIVILEGES;

exit

mariadb -u example_user --password=mypassword example_database

CREATE TABLE todo_list ( item_id INT

AUTO_INCREMENT, content VARCHAR(255),

PRIMARY KEY(item_id) );

INSERT INTO todo_list (content) VALUES

("My first important item");

INSERT INTO todo_list (content) VALUES

("My second important item");

SELECT * FROM todo_list;

exit

OK, MariaDB is working. We will use this trivial database and the easily guessed example_user, with the easily guessed password mypassword, for more testing later. Delete him and his database when your site has your actual content on it.

domain names and PHP under nginx

Check again that the default nginx web page comes up when you browse to the server.

Create the directories /var/www/blog.reaction.la and /var/www/reaction.la and put some html files in them, substituting your actual domains for the example domains.

mkdir /var/www/reaction.la && nano /var/www/reaction.la/index.html

mkdir /var/www/blog.reaction.la && nano /var/www/blog.reaction.la/index.html

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8" />

</head>

<body><h1>reaction.la index file</h1></body>

</html>

Delete the default in /etc/nginx/sites-enabled, and create a file, which I arbitrarily name config, that specifies how your domain names are to be handled, and how php is to be executed for each domain name.

This config file assumes your domain is called reaction.la and your php fpm service is called php7.3-fpm.service. Create the following config file, substituting your actual domains for the example domains, and your actual php fpm service for the fpm service.

nginx -t

# find the name of your php fpm service

systemctl status php* | grep fpm.service

# substitute the actual php fpm service for

# php7.3-fpm.sock in the configuration file.

systemctl stop nginx

rm -v /etc/nginx/sites-enabled/*

nano /etc/nginx/sites-enabled/config

server {

listen 80 default_server;

listen [::]:80 default_server;

return 301 $scheme://reaction.la$request_uri;

}

server {

listen 80;

listen [::]:80;

index index.php index.html;

server_name blog.reaction.la;

root /var/www/blog.reaction.la;

location / {

try_files $uri $uri/ =404;

}

location ~ \.php$ {

include snippets/fastcgi-php.conf;

fastcgi_pass unix:/run/php/php7.3-fpm.sock;

}

location = /favicon.ico {access_log off; }

location = /robots.txt {access_log off; allow all; }

location ~* \.(css|gif|ico|jpeg|jpg|js|png)$ {

expires max;

}

}

server {

listen 80;

listen [::]:80;

index index.php index.html;

server_name reaction.la;

root /var/www/reaction.la;

location / {

try_files $uri $uri/ =404;

}

location ~ \.php$ {

include snippets/fastcgi-php.conf;

fastcgi_pass unix:/run/php/php7.3-fpm.sock;

}

location = /favicon.ico {access_log off; }

location = /robots.txt {access_log off; allow all; }

location ~* \.(css|gif|ico|jpeg|jpg|js|png)$ {

expires max;

}

}

server {

server_name *.blog.reaction.la;

return 301 $scheme://blog.reaction.la$request_uri;

}

The first server is the default if no domain is recognized, and redirects the request to an actual server; the next two servers are the actual domains served; and the last server redirects to the second domain name if the domain name looks a bit like the second domain name. Notice that this eliminates those pesky wwws.

The root tells it where to find the actual files.

The first location tells nginx that if a file name is not found, give a 404 rather than doing the disastrously clever stuff that it is apt to do, and the second location tells it that if a file name ends in .php, pass it to php7.3-fpm.sock (you did substitute your actual php fpm service for php7.3-fpm.sock, right?)
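Instead of substituting by hand, you can find the socket name in /run/php and patch it into the config. This is a sketch, assuming GNU sed and GNU find and a single installed PHP version, since it takes the first socket it finds with a name like php7.3-fpm.sock. The function name set_fpm_socket is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: point every "fastcgi_pass unix:...phpX.Y-fpm.sock" in an nginx
# config at the php-fpm socket that actually exists under RUN_DIR.
set_fpm_socket() {
  local conf="$1" rundir="${2:-/run/php}" sock
  sock=$(find "$rundir" -maxdepth 1 -name 'php*-fpm.sock' | sort | head -n 1)
  if [ -z "$sock" ]; then
    echo "no php-fpm socket found in $rundir" >&2
    return 1
  fi
  sed -i "s#unix:[^;]*php[0-9.]*-fpm\.sock#unix:${sock}#g" "$conf"
}

# For real use, as root:
#   set_fpm_socket /etc/nginx/sites-enabled/config && nginx -t && systemctl restart nginx
# Demo with a stand-in socket file and a one-line config:
demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT
touch "$demo/php8.2-fpm.sock"
echo 'fastcgi_pass unix:/run/php/php7.3-fpm.sock;' >"$demo/config"
set_fpm_socket "$demo/config" "$demo"
cat "$demo/config"
```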

Now check that your configuration is OK with nginx -t, and restart nginx to read your configuration.

nginx -t

systemctl restart nginx

Browse to those domains, and check that the web pages come up, and that www gets redirected.

Now we will create some php files in those directories to check that php works.

echo "<?php phpinfo(); ?>" |tee /var/www/reaction.la/info.php

Then take a look at info.php in a browser.

If that works, then create the file /var/www/reaction.la/index.php containing:

<?php

$user = "example_user";

$password = "mypassword";

$database = "example_database";

$table = "todo_list";

try {

$db = new PDO("mysql:host=localhost;dbname=$database", $user, $password);

echo "<h2>TODO</h2><ol>";

foreach($db->query("SELECT content FROM $table") as $row) {

echo "<li>" . $row['content'] . "</li>";

}

echo "</ol>";

}

catch (PDOException $e) {

print "Error!: " . $e->getMessage() . "<br/>";

die();

}

?>

Browse to http://reaction.la. If that works, delete the info.php file, as it reveals private information. You now have domain names being served by lemp, and your database is accessible over the internet through PHP on those domain names.

SSL and DNSSEC

SSL encrypts communication between your server and the client, so that those in between cannot read it or change it.

It also somewhat protects against malicious people fooling the client into connecting to the wrong server. Unfortunately there are a thousand certificate authorities, some of them malicious or hostile, and if you have powerful enemies (and who cares about powerless enemies) some of them will cheerfully issue your enemy a certificate for your domain name. DNSSEC somewhat protects against this, since there is only one root of trust.

If you are reading this document, you are self hosting, in which case your registrar is probably providing your nameservers, in which case it is easy for them to set up DNSSEC for you. You just have to click the correct button on their website. One click, and it is done. And now you only have to worry about two parties that might potentially defect on you, the DNSSEC root and your registrar, instead of a thousand certificate authorities.

If, however, someone other than your registrar is managing your nameserver, so that your DNS records live on a machine controlled by one entity and your nameserver is controlled by a different entity, setting up DNSSEC gets complicated, and if that someone is not you, considerably more complicated. Setting up DNSSEC is then like setting up SSH, except that when you set up SSH, you control both machines, and when you set up DNSSEC, you don't. Don't even try. If you do try, make very sure the nameserver is doing the right thing before you submit the DNSSEC public key you generated to the registrar and attempt to get the registrar to do the right thing.

OK, DNSSEC was easy. (Or you just gave up because it was far too hard.) Now on to SSL.

Create the necessary DNS records: an A record pointing to your IP4 address, an AAAA record pointing to your IP6 address, a CAA record indicating who is the right issuer for your SSL certificate (so that not every certificate authority in the world is allowed to issue fake certificates to your enemies), and CNAME records for the www and git aliases.

The CAA record looks like:

@ CAA 0 issue "letsencrypt.org"

Go to whatsmydns and check if it looks right.
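Besides whatsmydns, you can query the CAA record yourself with dig. This is a sketch that assumes dig (from the dnsutils package on Debian) is installed. dig +short prints each CAA record as flags, tag, and quoted value, for example 0 issue "letsencrypt.org". The helper name caa_allows is hypothetical.

```shell
# Succeed if the CAA records on stdin (dig +short format) authorize
# the given certificate authority to issue certificates.
caa_allows() {
    # Match whole lines of the form: <flags> issue "<ca>"
    grep -Eq "^[0-9]+ issue \"$1\"\$"
}

# Usage:
#   dig +short CAA reaction.la | caa_allows letsencrypt.org && echo OK
```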

Certbot provides a very easy utility for installing SSL certificates. That is great if your domain name is already publicly pointing to your new host, and your new host is already working as desired without SSL/https.

# first make sure that your http only website is working as

# expected on your domain name and each subdomain.

# certbot's many mysterious, confusing, and frequently

# changing behaviors expect a working environment.

apt-get -qy install certbot python-certbot-nginx

certbot register --register-unsafely-without-email --agree-tos

certbot --nginx

# This also, by default, sets up automatic renewal,

# and reconfigures everything to redirect to https

That is not so great if you are setting up a new server and want the old server to keep on servicing people while you set up the new one. So here is the hard way, where you prove that you, personally, control the DNS records, but do not prove that the server certbot is modifying is right now publicly connected as that domain name.

(Obviously on your network the domain name should map to the new server. Meanwhile, for the rest of the world, the domain name continues to map to the old server, until the new server works.)

apt-get -qy install certbot python-certbot-nginx

certbot register --register-unsafely-without-email --agree-tos

certbot run -a manual --preferred-challenges dns -i nginx -d reaction.la -d blog.reaction.la

nginx -t
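With the manual DNS challenge, certbot pauses and asks you to create a TXT record. Under the ACME dns-01 challenge, the record name is _acme-challenge followed by the domain. The following sketch lets you check that the record has propagated before you tell certbot to continue. It assumes dig is installed, and acme_name is a hypothetical helper.

```shell
# Print the DNS name that the ACME dns-01 challenge looks up.
acme_name() {
    printf '_acme-challenge.%s\n' "$1"
}

# Usage, once for each -d domain, before telling certbot to continue:
#   dig +short TXT "$(acme_name reaction.la)"
#   dig +short TXT "$(acme_name blog.reaction.la)"
# Each should print the token certbot asked you to publish.
```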

This does not set up automatic renewal. To get automatic renewal going, once DNS points to this server you will need to renew with the webroot challenge rather than the manual one.

But if you are doing this, not on your test server, but on your live server, the easy way, which will also setup automatic renewal and configure your webserver to be https only, is:

`certbot --nginx -d mail.reaction.la,blog.reaction.la,reaction.la`

If instead you already have a certificate, because you copied over your /etc/letsencrypt directory:

apt-get -qy install certbot python-certbot-nginx

certbot install -i nginx

nginx -t

To renew certbot certificates, which has to be done every couple of months: if you previously did the manual challenge, then certbot renew will likely fail, because no default non-manual challenge exists. You need to set the renewal parameters for renewal to take place.

certbot renew --renew-by-default --http01

Because certbot automatically renews using the previous settings, you must previously have obtained the certificate by a process suitable for automation. That means you must have given it the information about how to do an automatic renewal (--webroot --webroot-path /var/www/reaction.la) by actually obtaining a certificate that way.
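To see which method certbot will reuse, look at the certificate's renewal file. Certbot normally keeps it at /etc/letsencrypt/renewal/<name>.conf, with a line such as authenticator = manual under [renewalparams]. The following is a sketch; renewal_authenticator is a hypothetical helper.

```shell
# Print the authenticator certbot recorded in a renewal config file.
# A value of "manual" means "certbot renew" cannot run unattended.
renewal_authenticator() {
    # Lines look like: authenticator = webroot
    sed -n 's/^authenticator *= *//p' "$1"
}

# Usage:
#   renewal_authenticator /etc/letsencrypt/renewal/reaction.la.conf
# If it prints "manual", then once DNS points here, reobtain the
# certificate with the webroot plugin (each -w applies to the -d
# options that follow it), then check that renewal works:
#   certbot certonly --webroot -w /var/www/reaction.la -d reaction.la \
#       -w /var/www/blog.reaction.la -d blog.reaction.la
#   certbot renew --dry-run
```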

To back up and restore letsencrypt, or to move your certificates from one server to another, run rsync -HAvaX reaction.la:/etc/letsencrypt /etc as root on the computer which will receive the backup. The letsencrypt directory gets mangled by tar, scp and sftp.

Again, browse to your server. You should get redirected to https, and https should work.

Back up the directory tree /etc/letsencrypt/, or else you can get into situations where renewal is a problem. Only Linux to Linux backups work, and even they do not exactly work: things go wrong. Certbot needs to fix its backup and restore process, which is broken. Apparently you should back up certain directories but not others. But backing up and restoring the whole tree works well enough for certbot install -i nginx.

The certbot-modified file for your SSL-enabled domain should now look like:

server {

return 301 $scheme://reaction.la$request_uri;

}

server {

index index.php index.html;

server_name blog.reaction.la;

root /var/www/blog.reaction.la;

index index.php;

location / {

try_files $uri $uri/ =404;

}

location ~ \.php$ {

include snippets/fastcgi-php.conf;

fastcgi_pass unix:/run/php/php7.3-fpm.sock;

}

location = /favicon.ico {access_log off; }

location = /robots.txt {access_log off; allow all; }

location ~* \.(css|gif|ico|jpeg|jpg|js|png)$ {

expires max;

}

listen [::]:443 ssl; # managed by Certbot

listen 443 ssl; # managed by Certbot

ssl_certificate /etc/letsencrypt/live/reaction.la/fullchain.pem; # managed by Certbot

ssl_certificate_key /etc/letsencrypt/live/reaction.la/privkey.pem; # managed by Certbot

include /etc/letsencrypt/options-ssl-nginx.conf; # managed by Certbot

ssl_dhparam /etc/letsencrypt/ssl-dhparams.pem; # managed by Certbot

}

server {

index index.html;

server_name reaction.la;

root /var/www/reaction.la;

location / {

try_files $uri $uri/ =404;

}

location ~ \.php$ {

include snippets/fastcgi-php.conf;

fastcgi_pass unix:/run/php/php7.3-fpm.sock;

}

location = /favicon.ico {access_log off; }

location = /robots.txt {access_log off; allow all; }

location ~* \.(css|gif|ico|jpeg|jpg|js|png)$ {

expires max;

}

listen [::]:443 ssl ipv6only=on; # managed by Certbot

listen 443 ssl; # managed by Certbot

ssl_certificate /etc/letsencrypt/live/reaction.la/fullchain.pem; # managed by Certbot

ssl_certificate_key /etc/letsencrypt/live/reaction.la/privkey.pem; # managed by Certbot

include /etc/letsencrypt/options-ssl-nginx.conf; # managed by Certbot

ssl_dhparam /etc/letsencrypt/ssl-dhparams.pem; # managed by Certbot

}

server {

server_name *.blog.reaction.la;

return 301 $scheme://blog.reaction.la$request_uri;

}

server {

server_name *.reaction.la;

return 301 $scheme://reaction.la$request_uri;

}

server {

if ($host = reaction.la) {

return 301 https://$host$request_uri;

} # managed by Certbot

listen 80;

listen [::]:80;

server_name reaction.la;

return 404; # managed by Certbot

}

server {

if ($host = blog.reaction.la) {

return 301 https://$host$request_uri;

} # managed by Certbot

listen 80;

listen [::]:80;

server_name blog.reaction.la;

return 404; # managed by Certbot

}

You may need to clean a few things up after certbot is done.

The important lines that certbot created in the file are the ssl_certificate lines and the additional servers listening on port 80, which exist to redirect http to the https servers listening on port 443. All redirects should use https instead of $scheme (fix them if they do not).
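Fixing the $scheme redirects can be done with sed. The following is a sketch; https_redirects is a hypothetical helper that filters a config from standard input to standard output, so you can review the result before installing it.

```shell
# Rewrite "return 301 $scheme://" as "return 301 https://" in an nginx
# config read on stdin, writing the result to stdout.
https_redirects() {
    # \$ matches a literal dollar sign; # is the sed delimiter, so the
    # slashes in the URL need no escaping.
    sed 's#return 301 \$scheme://#return 301 https://#g'
}

# Usage: review the changes before installing them.
#   https_redirects < /etc/nginx/sites-enabled/config > /tmp/config.new
#   diff /etc/nginx/sites-enabled/config /tmp/config.new
#   cp /tmp/config.new /etc/nginx/sites-enabled/config && nginx -t
```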

nginx starts as root, but runs as the unprivileged user www-data, who needs to have read permissions on every relevant directory. If you want to give php write permissions on a directory, or restrict www-data's and php's read permissions to some directories and not others, you could do clever stuff with groups and users, creating users that php scripts act as and making www-data a member of their group, but that is complicated and easy to get wrong.

A quick fix is to chown -R www-data:www-data the directories that your web server needs to write to, and only those directories, though I can hear security gurus gritting their teeth when I say this.

For all the directories that www-data merely needs to read:

find /var/www -type d -exec chmod 755 {} \;

find /var/www -type f -exec chmod 644 {} \;
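The two find commands can be wrapped in a function so they are easy to rerun after copying in new files. This is a sketch; fix_web_perms is a hypothetical helper. Using {} + instead of {} \; hands chmod many paths per invocation, which is faster on a large tree and has the same effect.

```shell
# Make every directory under a web root traversable and readable (755)
# and every file readable (644), as the two find commands above do.
fix_web_perms() {
    find "$1" -type d -exec chmod 755 {} +
    find "$1" -type f -exec chmod 644 {} +
}

# Usage: fix_web_perms /var/www
```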

Now you should delete the example user and the example database:

mariadb

REVOKE ALL PRIVILEGES, GRANT OPTION FROM

'example_user'@'localhost';

DROP USER 'example_user'@'localhost';

DROP DATABASE example_database;

exit

Wordpress on Lemp

apt-get -qy install php-curl php-gd php-intl php-mbstring php-soap php-xml php-xmlrpc zip php-zip

systemctl status php* | grep fpm.service

# substitute the service indicated above for php7.3-fpm.service below

systemctl stop nginx

systemctl stop php7.3-fpm.service

mariadb

CREATE DATABASE wordpress DEFAULT CHARACTER SET

utf8mb4 COLLATE utf8mb4_unicode_ci;

GRANT ALL ON wordpress.* TO 'wordpress_user'@'localhost'

IDENTIFIED BY 'FGikkdfj3878';

FLUSH PRIVILEGES;

exit
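The password above is only an example and should not be reused. The following sketch generates a random password and prints the same kind of statements for a given database, user, and password, ready to pipe into mariadb. It uses CREATE USER IF NOT EXISTS before the GRANT (MariaDB 10.1.3 and later) rather than relying on GRANT ... IDENTIFIED BY. random_password and wp_db_sql are hypothetical helpers.

```shell
# Print a random alphanumeric password of the given length (default 20).
random_password() {
    # LC_ALL=C makes tr treat /dev/urandom as raw bytes.
    LC_ALL=C tr -dc 'A-Za-z0-9' < /dev/urandom | head -c "${1:-20}"
    echo
}

# Print SQL creating a utf8mb4 database and a local user with full
# rights on it. Arguments: database, user, password. The password must
# not contain a single quote (random_password never produces one).
wp_db_sql() {
    cat <<EOF
CREATE DATABASE IF NOT EXISTS $1 DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;
CREATE USER IF NOT EXISTS '$2'@'localhost' IDENTIFIED BY '$3';
GRANT ALL ON $1.* TO '$2'@'localhost';
FLUSH PRIVILEGES;
EOF
}

# Usage: note the password, since wp-config.php needs it.
#   dbpass=$(random_password); echo "$dbpass"
#   wp_db_sql wordpress wordpress_user "$dbpass" | mariadb
```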

The lemp server block that will handle the wordpress domain needs to pass urls to index.php instead of returning a 404. (Handle your 404s and redirect issues with the Redirection Wordpress plugin, which is a whole lot easier, safer, and more convenient than editing redirects into your /etc/nginx/sites-enabled/* files.)

server {

. . .

location / {

#try_files $uri $uri/ =404;

try_files $uri $uri/ /index.php$is_args$args;

}

. . .

}

nginx -t

mkdir temp

cd temp

curl -LO https://wordpress.org/latest.tar.gz

tar -xzvf latest.tar.gz

cp -v wordpress/wp-config-sample.php wordpress/wp-config.php

cp -av wordpress/. /var/www/blog.reaction.la

chown -R www-data:www-data /var/www/blog.reaction.la && find /var/www -type d -exec chmod 755 {} \; && find /var/www -type f -exec chmod 644 {} \;

# so that wordpress can write to the directory

curl -s https://api.wordpress.org/secret-key/1.1/salt/

nano /var/www/blog.reaction.la/wp-config.php

Replace the defines that are there

define('LOGGED_IN_KEY', 'put your unique phrase here');

with the defines you just downloaded from wordpress, and replace DB_NAME, DB_USER, DB_PASSWORD, and FS_METHOD:

…

// ** Mariadb settings //

/** The name of the database for WordPress */

define('DB_NAME', 'wordpress');

/** MySQL database username */

define('DB_USER', 'wordpress_user');

/** MySQL database password */

define('DB_PASSWORD', 'FGikkdfj3878');

/** MySQL hostname */

define( 'DB_HOST', 'localhost' );

/** Database Charset to use in creating database tables. */

define( 'DB_CHARSET', 'utf8mb4' );

/** The Database Collate type. */

define( 'DB_COLLATE', 'utf8mb4_unicode_ci' );

…
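Rather than pasting the salts by hand in nano, you can swap them in with awk. This sketch assumes the placeholder lines still contain the text put your unique phrase here, as they do in wp-config-sample.php. replace_wp_salts is a hypothetical helper. It writes back into the same file, so the ownership and permissions set earlier are kept.

```shell
# Replace the placeholder key/salt defines in a wp-config.php with the
# define() lines held in another file.
# $1: path to wp-config.php   $2: path to the downloaded defines
replace_wp_salts() {
    # Refuse to run without downloaded keys, so the placeholders are
    # never deleted with nothing put in their place.
    [ -s "$2" ] || return 1
    tmp=$(mktemp) || return 1
    awk -v salts="$2" '
        /put your unique phrase here/ {
            # At the first placeholder line, print every downloaded
            # line; skip all the placeholder lines themselves.
            if (!done) {
                while ((getline line < salts) > 0) print line
                done = 1
            }
            next
        }
        { print }
    ' "$1" > "$tmp" && cat "$tmp" > "$1"   # cat > keeps owner and mode
    status=$?
    rm -f "$tmp"
    return "$status"
}

# Usage:
#   curl -s https://api.wordpress.org/secret-key/1.1/salt/ > /tmp/salts
#   replace_wp_salts /var/www/blog.reaction.la/wp-config.php /tmp/salts
```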

systemctl start php7.3-fpm.service

systemctl start nginx

It should now be possible to navigate to your wordpress domain in your web browser and finish the setup there.

Exporting databases

Interacting directly with your database through the MariaDB command line is apt to lead to disaster.

Installing PhpMyAdmin has a little gotcha on Debian 9, which is covered in this tutorial, but I just do not use PhpMyAdmin, even though it is easier and safer.

To export by command line

systemctl stop nginx

systemctl stop php7.3-fpm.service

mkdir temp && cd temp

fn=blogdb

db=wordpress

dbuser=wordpress_user

dbpass=FGikkdfj3878

mysqldump -u $dbuser --password=$dbpass $db > $fn.sql

head -n 30 $fn.sql

zip $fn.sql.zip $fn.sql

systemctl start php7.3-fpm.service

systemctl start nginx
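The head command above shows the start of the dump. A truncated dump can still look fine at the start, so it is also worth checking the end. mysqldump normally finishes a complete dump with a comment line beginning -- Dump completed (unless it is run with --skip-comments). The following is a sketch built on that; dump_complete is a hypothetical helper.

```shell
# Succeed if a mysqldump output file ends with its completion comment,
# which a dump cut short by an error or a full disk lacks.
dump_complete() {
    tail -n 3 "$1" | grep -q '^-- Dump completed'
}

# Usage, before zipping:
#   dump_complete "$fn.sql" && echo "dump OK" || echo "dump truncated"
```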

Moving a wordpress blog to new lemp server

Prerequisite: you have configured Wordpress on Lemp

Copy everything from the web server source directory of the previous wordpress installation to the web server of the new wordpress installation.

chown -R www-data:www-data /var/www/blog.reaction.la

Replace the defines for DB_NAME, DB_USER, and DB_PASSWORD in wp-config.php, as described in Wordpress on Lemp.

To import the database by command line:

systemctl stop nginx

systemctl stop php7.3-fpm.service

# we don’t want anyone browsing the blog while we are setting it up

# nor the wordpress update service running.

mariadb

DROP DATABASE IF EXISTS wordpress;

CREATE DATABASE wordpress DEFAULT CHARACTER SET

utf8mb4 COLLATE utf8mb4_unicode_ci;

GRANT ALL ON wordpress.* TO 'wordpress_user'@'localhost'

IDENTIFIED BY 'FGikkdfj3878';

exit

At this point, the database is still empty, so if you start nginx and browse to the blog, you will get the wordpress five minute install, as in Wordpress on Lemp. Don’t do that; or, if you do it to make sure everything is working, start over by deleting and recreating the database as above.

Now we will populate the database.

fn=wordpress

db=wordpress

dbuser=wordpress_user

dbpass=FGikkdfj3878

unzip $fn.sql.zip

mv *.sql $fn.sql

mariadb -u $dbuser --password=$dbpass $db < $fn.sql

mariadb -u $dbuser --password=$dbpass $db

SHOW TABLES;

SELECT COUNT(*) FROM wp_posts;

SELECT * FROM wp_posts l LIMIT 20;

exit

Adjust $table_prefix = 'wp_'; in wp-config.php if necessary.

systemctl start php7.3-fpm.service

systemctl start nginx

Inside the sql file there may be references to the old directories (search for 'recently_edited'), and to the old user who had the privilege to create views (search for DEFINER=). Replace them with the new directories and the new database user, in this example wordpress_user.

Edit the siteurl, admin_email and new_admin_email fields (rows of the wp_options table) of the blog database to the new domain and new admin email.
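Both edits can be scripted. The following is a sketch with two hypothetical helpers. fix_definer rewrites the DEFINER clauses that mysqldump writes (of the form DEFINER=`user`@`host`) so they name the new user. wp_site_sql prints UPDATE statements for the options table, assuming the default wp_ prefix unless you pass another. It also sets the home option, which WordPress normally keeps equal to siteurl.

```shell
# Rewrite every DEFINER=`olduser`@`oldhost` clause in a SQL dump read
# on stdin so it names the given user at localhost. The user name must
# not contain a slash or a backtick.
fix_definer() {
    sed -E 's/DEFINER=`[^`]+`@`[^`]+`/DEFINER=`'"$1"'`@`localhost`/g'
}

# Print SQL setting the site URL and admin email options.
# Arguments: table prefix, site URL, admin email.
wp_site_sql() {
    cat <<EOF
UPDATE ${1}options SET option_value = '$2' WHERE option_name IN ('siteurl', 'home');
UPDATE ${1}options SET option_value = '$3' WHERE option_name IN ('admin_email', 'new_admin_email');
EOF
}

# Usage:
#   fix_definer wordpress_user < wordpress.sql > wordpress.fixed.sql
#   wp_site_sql wp_ https://blog.reaction.la admin@reaction.la \
#       | mariadb -u "$dbuser" --password="$dbpass" "$db"
```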

mariadb -u $dbuser --password=$dbpass $db < $db.sql

mariadb -u $dbuser --password=$dbpass $db

SHOW TABLES;

SELECT COUNT(*) FROM wp_comments;

SELECT * FROM wp_comments l LIMIT 10;

exit

Adjust $table_prefix = 'wp_'; in wp-config.php if necessary.

systemctl start php7.3-fpm.service

systemctl start nginx

Your blog should now work.

Logging and awstats

Logging

First create, in the standard and expected location, a place for nginx to log stuff.

mkdir /var/log/nginx

chown -R www-data:www-data /var/log/nginx

Then edit the virtual servers to be logged, which are in the directory /etc/nginx/sites-enabled and in this example in the file /etc/nginx/sites-enabled/config

server {

server_name reaction.la;

root /var/www/reaction.la;

…

access_log /var/log/nginx/reaction.la.access.log;

error_log /var/log/nginx/reaction.la.error.log;

…

}

The default log file format logs client IP addresses, which on a server located in the cloud might be a problem: people who do not have your best interests at heart might get them.

So you might want a custom format that does not log the remote address. On the other hand, Awstats is not going to be happy with that format. A compromise is to create a cron job that cuts the logs daily, a cron job that runs Awstats, and a cron job that then deletes the cut log when Awstats is done with it.

There is no point to leaving a gigantic pile of data, that could hang you and your friends, sitting around wasting space.
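One possible middle ground is to mask the last octet of IPv4 client addresses before a cut log is kept or handed to Awstats. The log keeps the combined format that Awstats expects, but no longer pins down individual clients. This is a sketch under the assumption that truncated addresses are acceptable to you; it is not the setup described above. anonymize_log is a hypothetical helper, and IPv6 addresses pass through it unchanged.

```shell
# Read nginx access log lines (default "combined" format, client address
# first) on stdin and zero the last octet of IPv4 client addresses, so
# 203.0.113.45 becomes 203.0.113.0. All other fields are untouched.
anonymize_log() {
    sed -E 's/^([0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3})\.[0-9]{1,3} /\1.0 /'
}

# Usage, on a log nginx is no longer writing to (for example the
# rotated .1 file):
#   anonymize_log < /var/log/nginx/reaction.la.access.log.1 > anon.log
```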

Postfix and Dovecot

Postfix and Dovecot are a pile of matchsticks and glue from which you are expected to assemble a boat.

Probably I should be using one of those email setup packages that set up everything for you. Mailinabox seems to be primarily tested and developed on Ubuntu, and is explicitly not supported on Debian.

Mailcow, however, runs on Debian. But Mailcow wants 6 GiB of RAM, plus one GiB of swap, plus twenty GiB of disk. Ouch. Mailinabox can get by with one GiB of RAM, plus one GiB of swap. It says 512 MiB is OK, though two GiB of RAM is strongly recommended.

Mailinabox wants the domain name box.yourdomain.com, and, after it is set up, wants the nameservers ns1.box.yourdomain.com and ns2.box.yourdomain.com. They, fortunately, have a namecheap tutorial.


Linuxbabe, on the other hand, recommends iRedMail, but iRedMail needs 4 GiB and gets far less love from users than Mailinabox.


Postfix is a matter of installing it, but it expects an MX record in your nameserver, and quite a few other records are almost essential.

Postfix automagically does the right thing for users that have accounts, using their names and passwords to set up mailboxes. It gets complicated only when people start to pile supposedly more advanced mail systems, databases, and webmail on top of it.

With Postfix alone, you can receive emails at your server and have them automatically forwarded to a more useful email address. You can also receive, send, and reply to emails when logged in to your server using the command line utility, which I only use to make sure Postfix is working before I configure Dovecot on top of it.

To receive your emails in an actually useful form, you are going to have to forward them or set up a Dovecot service. Dovecot provides Pop3 and IMAP. Postfix does not provide any of that.

Postfix is not a Pop3 or IMAP server. It sends, receives, and forwards emails. You cannot set up an email client such as Thunderbird to remotely access your emails – they are only available to people logged in on the server who are never going to look at them anyway, because there is no useful UI to read them and reply to them.

So you have to configure three or more independent things, each of which has an endlessly complicated configuration that is intimately, arcanely, and obscurely connected to the configuration of each of the other things.

Setting DNS entries for email

An MX record for reaction.la will read simply mail, with no trailing full stop (a name ending in a full stop is for the case that you are trying to have a totally unrelated host handle your mail). Check that it is working by using an MX lookup service such as MX tools and Dig.

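You will of course need a corresponding A record (and AAAA record) for mail. Do not make mail a CNAME: an MX record must point at a name that has address records, not at an alias (RFC 2181, section 10.3).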
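Besides MX tools, you can check the record from any machine with dig. dig +short MX prints one preference and host pair per line, and the lowest preference is tried first. The following is a sketch; mx_hosts is a hypothetical helper.

```shell
# Print the mail host names from "dig +short MX" output on stdin,
# in the order senders try them (lowest preference first).
mx_hosts() {
    sort -n | awk '{ print $2 }'
}

# Usage: should print mail.reaction.la. (dig shows the trailing dot)
#   dig +short MX reaction.la | mx_hosts
#   dig +short A mail.reaction.la
#   dig +short AAAA mail.reaction.la
```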

You are going to need a PTR record, or else your mail is likely to be rejected as spam.

The problem is that a reverse DNS lookup is not going to query the man who keeps your DNS records, but the man who gave you your IP address.

The UI for creating a PTR record is going to be in a different place, very likely maintained by a different man, using different software, than the UI for creating your MX record. It will have a link that is probably called something like "reverse" rather than PTR or DNS.

Look up your IP in MX tools or Dig.

You must create the reverse DNS zone on the authoritative DNS nameserver for the IP address of your server. Which is controlled by whoever gave you your IP address, not whoever is maintaining your DNS records. You can find if your reverse IP is working by doing a reverse lookup in Dig.
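Why the PTR record lives with whoever gave you your IP address: a reverse lookup is an ordinary DNS query for a name under in-addr.arpa, built by reversing the address's octets, and that zone is delegated along with the address block. The following sketch shows the conversion that dig -x does for you. reverse_name is a hypothetical helper and handles IPv4 only.

```shell
# Print the in-addr.arpa name that a reverse (PTR) lookup of an IPv4
# address queries: the octets in reverse order under in-addr.arpa.
reverse_name() {
    printf '%s\n' "$1" | awk -F. '{ print $4 "." $3 "." $2 "." $1 ".in-addr.arpa" }'
}

# Usage: both lines ask the same question; the answer should be your
# mail host name, e.g. mail.reaction.la.
#   dig +short -x 69.64.153.131
#   dig +short PTR "$(reverse_name 69.64.153.131)"
```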

Thus the instructions for setting up a PTR record that you find on the internet are unlikely to be applicable to you, and will only confuse you.

Without an SPF TXT record stating that mail only comes from your IP4 and IP6 addresses, an MX record is useless, because it is apt to be massively spammed: your enemies will issue spam in your name, with the result that your MX record will be blacklisted.

An SPF record is of type TXT and looks like

v=spf1 ip4:69.64.153.131 ip6:DEAD:BEEF:DEAD:55a:0:0:0:1 -all

indicating that all mail under this domain name will be sent from one and only one host, at one IPv4 and one IPv6 address, and that mail from anywhere else should fail the SPF check.
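The record is simple enough to generate from your two addresses, and worth checking afterwards to confirm what the world actually sees. This is a sketch; spf_record is a hypothetical helper.

```shell
# Print an SPF TXT record value that authorizes exactly one IPv4 and
# one IPv6 address to send mail for the domain; "-all" makes mail from
# any other address fail the SPF check.
spf_record() {
    printf 'v=spf1 ip4:%s ip6:%s -all\n' "$1" "$2"
}

# Usage: generate the value to paste into your DNS provider's UI, then
# check the published record:
#   spf_record 69.64.153.131 DEAD:BEEF:DEAD:55a:0:0:0:1
#   dig +short TXT reaction.la | grep 'v=spf1'
```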

A DKIM record publishes the public key that verifies a digital signature your server adds to sent mail, to prevent mail from being modified, or fake mail from being injected, as it goes through the multiple intermediate servers.

By the time the ultimate recipient sees the email, no end of intermediate computers have had their hands on it, but pgp signing is obviously superior, since that is controlled by the actual sender, not one more intermediary. DKIM is the not quite good enough being the enemy of the good enough.

Worse, DKIM means that any email sent from your server is signed by your server, so if you send a private message, and someone defects on you by making it public, it is hard to claim that he is making it up. Sometimes you want your emails signed with a signature verifiable by third parties, and sometimes this is potentially dangerous. Gpg allows you to sign some things and not other things. DKIM means that everything gets signed, without you being aware of it.

DKIM renders all messages non repudiable, and some messages vitally need to be repudiable.

Gpg is better than DKIM, but has the enormous disadvantage that it cannot authenticate except by signing. If you send a message to a single recipient or a quite small number of recipients, you usually want him to know for sure it is from you, and has not been altered in transit, but not be able to prove to the whole world that it is from you.

A DMARC record can tell the recipient that mail from rhocoin.org will always and only come from senders like user@rhocoin.org. This can be an inconvenient restriction on one's ability to use a more relevant identity.

Further, intermediate servers keep mangling messages sent through them, breaking the DKIM signatures and resulting in no end of spurious error messages.

You want to stop other people's email servers from misbehaving on the sender addresses. You don't want to stop your server from misbehaving.

The trouble with SPF and DKIM is that, without DMARC, they have no impact on the sender address, and thus don't stop spearphishing attacks using your identity. They do stop spam attacks from getting your server blacklisted by using your server identity to send junk mail.

SPF is sufficient to stop your server from getting blacklisted, and largely irrelevant to preventing spearphishing. But SPF with DMARC at least does something about spearphishing, while DKIM with DMARC does a far more thorough job on spearphishing, but produces a lot of false warnings due to intermediate servers mangling the email in ways that invalidate the signature.

The only useful thing that DMARC can do is ensure address alignment with SPF and DKIM, so that only people who can perform an email login to your server can send email as someone who has such a login.

But DKIM is complicated to install, and a lot more complicated to manage because you will be endlessly struggling to resolve the problem of signatures being falsely invalidated. It may be far more effective against spearphishing if the end user pays attention, but if people keep seeing false warnings, they are being trained to ignore valid warnings.

A solution to all these problems is to use the value ed25519-sha256 for a= in the DKIM header, which ensures that obsolete intermediaries will ignore your DKIM, so third parties will see fewer false warnings. Combine that with a cron job that regularly rotates your DKIM keys and publishes the old secret key on the DNS 36 hours after it has been rotated out, under the n=OldSecret_... field of the DKIM record, thus rendering your emails deniable. (RSA keys are inconveniently large for this protocol, since they do not fit comfortably in a DNS record.)
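To see why ed25519 suits this protocol, here is a sketch that generates an ed25519 key pair with OpenSSL (1.1.1 or later assumed) and prints a DKIM public key record in the RFC 8463 form. In that form, k=ed25519 in the DNS record pairs with a=ed25519-sha256 in the signature header, and p= holds the raw 32-byte public key in base64. The sketch does not implement the key rotation or the publication of old secret keys described above. dkim_ed25519_record is a hypothetical helper, and the key path in the usage line is only an example.

```shell
# Generate an ed25519 private key in file $1 and print the matching
# DKIM DNS TXT record value.
dkim_ed25519_record() {
    openssl genpkey -algorithm ED25519 -out "$1" || return 1
    # The DER encoding of an ed25519 public key is a fixed 12-byte
    # header followed by the 32-byte raw key; keep only the raw key.
    pub=$(openssl pkey -in "$1" -pubout -outform DER | tail -c 32 | base64)
    printf 'v=DKIM1; k=ed25519; p=%s\n' "$pub"
}

# Usage (the whole record is about 67 characters, well under the 255
# character limit of a single TXT string):
#   dkim_ed25519_record /etc/dkim/example.key
```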

But that is quite a bit of work, so I just have not gotten around to it. No one seems to have gotten around to it. It needs to be part of Mailinabox so that it becomes a standard.

Until you can install DKIM with a cron job that renders email repudiable, do not install DKIM. (And disable it if Mailinabox enables it.)

Install Postfix

Here we will install postfix on debian so that it can be used to send emails by local users and applications only - that is, those installed on the same server as Postfix, such as your blog, mailutils, and Gitea, and can receive emails to those local users.

echo 'export MAIL=$HOME/Maildir/' > /etc/profile.d/maildir.sh && chmod +x /etc/profile.d/maildir.sh

Start up a new shell, so that $MAIL is correctly set

echo $MAIL

apt -qy update

apt -qy install mailutils

apt -qy install postfix

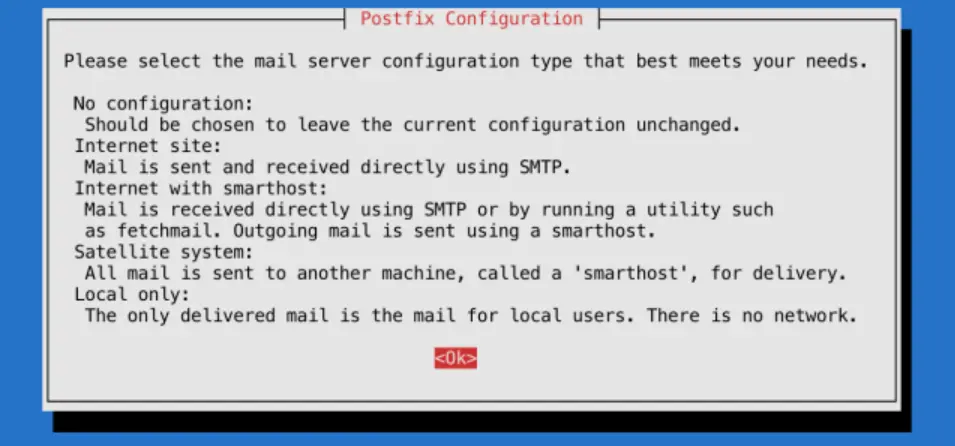

Near the end of the installation process, you will be presented with a window that looks like the one in the image below:

{width=100%}

If

{width=100%}

If <Ok> is not highlighted, hit tab.

Press ENTER to continue.

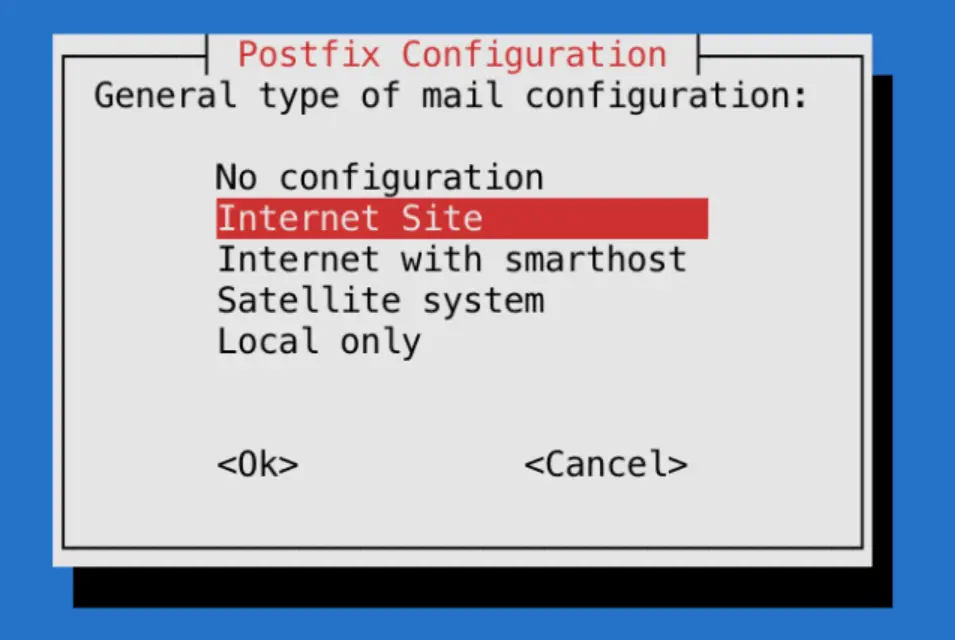

The default option is Internet Site, which is preselected on the following screen:

{width=100%}

Press

{width=100%}

Press ENTER to continue.

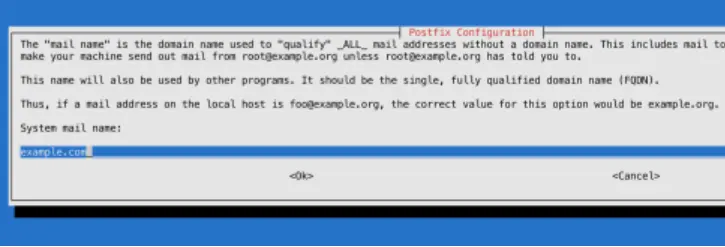

After that, you’ll get another window to set the domain name of the site that is sending the email:

{width=100%}

The

{width=100%}

The System mail name should be the same as the name you assigned to the server when you were creating it. When you’ve finished, press TAB, then ENTER.

You now have Postfix installed and are ready to modify its configuration settings.

Configuring Postfix

postconf -e home_mailbox=Maildir/

Maildir is incompatible with lots of modern mail software, but a lot more compatible with all manner of programs trying to use Postfix, including Dovecot.

Your forwarding file is, by default, broken. It forwards all administrative system-generated email to the nonroot local user deb10, who probably does not exist on your system.

Set up forwarding, so that you get emails sent to root on the system at your personal, external email address or at a suitable nonroot local user, or create the local user deb10.

To configure Postfix so that system-generated emails will be sent to your email address or to some other non root local user, you need to edit the /etc/aliases file.

nano /etc/aliases

mailer-daemon: postmaster

postmaster: root

nobody: root

hostmaster: root

usenet: root

news: root

webmaster: root

www: root

ftp: root

abuse: root

noc: root

security: root

root: «your_email_address»

After changing /etc/aliases you must issue the command newaliases to inform the mail system. (Rebooting does not do it.)
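If you would rather script the change than edit the file in nano, here is a sketch. set_root_alias is a hypothetical helper: it replaces an existing root: line, or appends one if there is none. Run newaliases afterwards, as above.

```shell
# Point the root alias in an aliases file at a new destination.
set_root_alias() {
    file="$1" dest="$2"
    if grep -q '^root:' "$file"; then
        # | is the sed delimiter, so dest must not contain one.
        sed -i "s|^root:.*|root: $dest|" "$file"
    else
        printf 'root: %s\n' "$dest" >> "$file"
    fi
}

# Usage (you@example.com stands in for your real address):
#   set_root_alias /etc/aliases you@example.com && newaliases
```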

/etc/aliases remaps mail to users on your internal mail server, but likely your mail server is also the MX host for another domain. For this, you are going to need a rather more powerful tool, which I address later.

The postmaster: root setting ensures that system-generated emails are sent to the root user. You want to edit these settings so these emails are rerouted to your email address. To accomplish that, replace «your_email_address» with your actual email address, or the name of a non root user. Most systems do not allow email clients to log in as root, so you cannot easily access emails that wind up at root@mail.rhocoin.org.

Probably you should create a user postmaster.

If you’re hosting multiple domains on a single server, the other domains must be passed to Postfix using the mydestination directive if other people are going to send email addressed to users on those domains. But chances are you also have other domains on another server, which declare this server as their MX record in their DNS. mydestination is not the place for the domain names of those servers, and putting them in mydestination is apt to result in mysterious failures.

Those other domains, not hosted on this physical machine but whose MX record points to this machine, are virtual_alias_domains, and postfix has to handle messages addressed to their users differently.

Set the mailbox limit to an appropriate fraction of your total available disk space, and the attachment limit to an appropriate fraction of your mailbox size limit.

Check that myhostname is consistent with a reverse IP lookup. (It should already be, if you set up reverse IP in advance.)

Set mydestination to all dns names that map to your server (it probably already does)

postconf -e mailbox_size_limit=268435456

postconf -e message_size_limit=67108864
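The two limits above are in bytes: 268435456 is 256 MiB (256 * 1024 * 1024) and 67108864 is 64 MiB. Postfix treats it as a configuration error if mailbox_size_limit is smaller than message_size_limit, since a mailbox must be able to hold at least one maximum-size message. The following sketch computes other values and checks that constraint; mib is a hypothetical helper.

```shell
# Convert mebibytes to bytes, the unit postconf expects for these limits.
mib() {
    echo $(( $1 * 1024 * 1024 ))
}

mailbox=$(mib 256)   # 268435456
message=$(mib 64)    # 67108864

# A mailbox must be able to hold at least one maximum-size message.
if [ "$message" -gt "$mailbox" ]; then
    echo "message_size_limit exceeds mailbox_size_limit" >&2
fi

# Usage on the server:
#   postconf -e mailbox_size_limit="$mailbox"
#   postconf -e message_size_limit="$message"
```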

postconf

postconf myhostname

postconf mydestination

postconf smtpd_banner

# you don't want your enemies to know what OS version you are running,

# as this may make hacking easier

postconf -e smtpd_banner='$myhostname ESMTP $mail_name'

postconf -e smtpd_helo_required=yes

postconf smtpd_helo_restrictions

postconf -e smtpd_helo_restrictions='permit_mynetworks, permit_sasl_authenticated, reject_invalid_helo_hostname, reject_non_fqdn_helo_hostname, reject_unknown_helo_hostname'

postconf smtpd_sender_restrictions

postconf -e smtpd_sender_restrictions='permit_mynetworks, permit_sasl_authenticated, reject_unknown_sender_domain'

postconf smtpd_client_restrictions

postconf -e smtpd_client_restrictions='permit_mynetworks, permit_sasl_authenticated, reject_unknown_reverse_client_hostname'

newaliases && systemctl restart postfix

# check that you have in fact set stuff as you intended

postconf -n

postfix check

ufw allow Postfix

ufw status verbose

# port 25 should be open

ss -lnpt | grep master

# postfix should be listening on port 25

su -l «nonroot-user»

mail «you@some-email»

At this point mail should just work. Check your email at you@some-email and reply to it. Then check if you have received the reply.

cat /var/log/mail.log

su -l «nonroot-user»

mail

If mail is not working, check the logs.

Now email should be working with the command line utility.

Send email to local users and to your external email address using the command line utility mail. Check that you can read it.

Send in mail from an outside system. Reply to that outside system, and check that your reply gets through.

You can read mail with the command line utility mail, but reading and writing mail that way is too painful, except for test purposes. If you memorize lots of mail commands, it is usable, sort of.

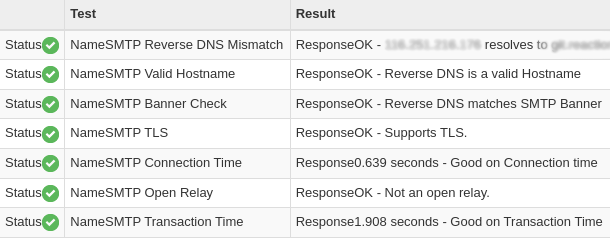

Test your mail server using MX tools

{width=100%}

{width=100%}

Now you have a basic Postfix email server up and running. You can send plain text emails and read incoming emails using the command line.

After sending and receiving a few emails, check for issues:

cat /var/log/mail.log | grep -E --color "warning|error|fatal|panic"

postqueue -p

If you see a pile of warnings like `warning: symlink leaves directory: /etc/postfix/./makedefs.out`, that is just noise. Turn it off by replacing the symbolic link with a hard link:

postfix check

rm /etc/postfix/makedefs.out; ln /usr/share/postfix/makedefs.out /etc/postfix/makedefs.out

postfix check

Or just ignore it.

Make sure that MX tools thinks your mail server is working.

TLS

Now you can send mail while logged in to your server using the command line program mail, programs running on your server can send you or anyone else mail, and anyone can send mail to users that exist on your server, which can be forwarded to actually useful email addresses.

But your email is in the clear, and can be read, or altered, by any unpleasant person between you and the destination that intends you harm or harm to those that you are messaging. Of which there are likely quite a lot. Such alterations are likely to result in your email server ending up blacklisted as a result of other people's anti malware precautions.

cat /var/log/mail.log | grep -E --color "warning|error|fatal|panic|TLS"

postqueue -p

You probably will not see any TLS activity yet. You want to configure Postfix to always attempt TLS, but not require it.

# TLS parameters

#

# SMTP from other servers to yours

# Make sure to substitute your certificates in for the smtp

# and smtpd certificates.

postconf -e smtpd_tls_cert_file=/etc/letsencrypt/live/rhocoin.org/fullchain.pem

postconf -e smtpd_tls_key_file=/etc/letsencrypt/live/rhocoin.org/privkey.pem

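postconf -e smtpd_tls_security_level=may

postconf -e smtpd_tls_auth_only=yes

# Quote values containing ! so that the shell does not treat them as

# history expansions, and so that the spaces stay inside one argument.

postconf -e 'smtpd_tls_mandatory_protocols=!SSLv2, !SSLv3, !TLSv1, !TLSv1.1'

postconf -e 'smtpd_tls_protocols=!SSLv2, !SSLv3, !TLSv1, !TLSv1.1'

postconf -e smtpd_tls_loglevel=1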

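# smtpd_use_tls is obsolete, and is overridden once smtpd_tls_security_level

# is set, so this line is harmless but can be left out.

postconf -e smtpd_use_tls=yes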

postconf smtpd_tls_session_cache_database

# should be:

# smtpd_tls_session_cache_database = btree:${data_directory}/smtpd_scache

#

# SMTP from your server to others

postconf -e smtp_tls_cert_file=/etc/letsencrypt/live/rhocoin.org/fullchain.pem

postconf -e smtp_tls_key_file=/etc/letsencrypt/live/rhocoin.org/privkey.pem

postconf -e smtp_tls_security_level=may

postconf -e smtp_tls_note_starttls_offer=yes

postconf -e smtp_tls_mandatory_protocols='!SSLv2, !SSLv3, !TLSv1, !TLSv1.1'

postconf -e smtp_tls_protocols='!SSLv2, !SSLv3, !TLSv1, !TLSv1.1'

postconf -e smtp_tls_loglevel=1

postconf smtp_tls_session_cache_database

# should be:

# smtp_tls_session_cache_database = btree:${data_directory}/smtp_scache

# end TLS parameters

systemctl restart postfix
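To confirm the settings took effect, you can list the non-default TLS parameters and then connect to your own server the way another mail server would. This is a sketch: it assumes OpenSSL's command line tool is installed on the machine you test from, and that outbound port 25 is not blocked there (many residential ISPs block it). Substitute your own host for rhocoin.org.

```shell
# List the TLS parameters that differ from Postfix's defaults,
# which should include everything set with postconf -e above.
postconf -n | grep tls

# Connect as a remote mail server would: open port 25, issue STARTTLS,
# and print a short summary of the negotiated protocol and cipher.
openssl s_client -connect rhocoin.org:25 -starttls smtp -brief </dev/null
```

The protocol reported should be TLSv1.2 or TLSv1.3, since the older versions were excluded above.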

The excluded protocols are weak or suspect: SSLv2 and SSLv3 have known holes, and TLSv1 and TLSv1.1 are deprecated.

I have not bothered checking the list of permitted ciphers, many of which would doubtless horrify me, because email security is incurably broken, and trying to fix it is an endless and insoluble problem. SSH is more readily fixable.

Now send an email from one of your actually useful email accounts on your client computer, and then, using the nearly unusable command line utility mail, send a response.

cat /var/log/mail.log |grep TLS

You should now see some TLS activity for those emails, and you should receive the emails.
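Grepping for TLS shows every line. To see at a glance which protocol versions your peers actually negotiate, a function like this one tallies the protocol names that appear in the log. It is a sketch that assumes the TLS log lines name the protocol in the form TLSv1.2 or TLSv1.3, as Postfix's connection-established messages do at log level 1; tls_versions is a made-up name.

```shell
#!/bin/sh
# tls_versions: hypothetical helper that tallies the TLS protocol versions
# mentioned in a mail log. Assumes lines name the protocol as TLSv1,
# TLSv1.2, TLSv1.3 and so on. Reads /var/log/mail.log unless given a file.
tls_versions() {
  log=${1:-/var/log/mail.log}
  grep -oE 'TLSv1(\.[0-3])?' "$log" | sort | uniq -c | sort -rn
}
```

Any TLSv1 or TLSv1.1 entries would mean the protocol exclusions above are not in effect.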

OK, now we are all done, unless you want people to send you emails at cherry@rhocoin.org, and to be actually able to usefully read those emails without setting up forwarding to another address.

Well, not quite done: now that you can receive email, you need to add your address to your DMARC policy, so that aggregate (rua) reports have somewhere to go.

v=DMARC1; p=quarantine; rua=mailto:postmaster@rhocoin.org

A DMARC record is a TXT record with the hostname _dmarc (for this domain, _dmarc.rhocoin.org), and the policy is its text value.
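If your DNS provider accepts a BIND-style zone file, the record can be written as a single line. The sketch below prints such a line from its parts, then shows how to check what is actually published once DNS has propagated. dmarc_record is a made-up helper, and dig (from Debian's dnsutils or bind9-dnsutils package) is assumed to be installed.

```shell
#!/bin/sh
# dmarc_record: hypothetical helper that prints a BIND zone file line
# for a DMARC policy. Arguments: domain, policy (none, quarantine or
# reject), and the address that should receive aggregate (rua) reports.
dmarc_record() {
  printf '_dmarc.%s. IN TXT "v=DMARC1; p=%s; rua=mailto:%s"\n' "$1" "$2" "$3"
}

dmarc_record rhocoin.org quarantine postmaster@rhocoin.org

# Once published, query the live record (needs network access):
# dig +short TXT _dmarc.rhocoin.org
```

For the values above it prints _dmarc.rhocoin.org. IN TXT "v=DMARC1; p=quarantine; rua=mailto:postmaster@rhocoin.org".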

SASL

At this point any random person on the internet can send mail to root@rhocoin.org, and you can automatically forward it to an actually usable email address, but you cannot access the root@rhocoin.org mailbox from a laptop using Thunderbird, and accessing it through the command line using mail is not very useful.

This is because, although Postfix by default accepts SASL-authenticated mail submissions for relay to anywhere,

smtpd_relay_restrictions = permit_mynetworks permit_sasl_authenticated defer_unauth_destination

it as yet has nothing configured to provide SASL authentication.

We don't want random spammers on the internet to send email as random@rhocoin.org, but we do want authenticated users to be able to do as they please.

So we need to install and configure Dovecot to provide SASL, to authenticate cherry to Postfix, and we need to tell Postfix to accept Dovecot authentication.
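On the Postfix side, accepting Dovecot authentication comes down to a few main.cf parameters. The following is a sketch based on the Dovecot example in Postfix's SASL_README. It assumes Dovecot's auth service will listen on the socket /var/spool/postfix/private/auth, which Postfix names relative to its queue directory; creating that listener is part of the Dovecot configuration, so apply these once Dovecot is running. Because smtpd_tls_auth_only=yes was set in the TLS section, Postfix will only offer authentication after TLS is established.

```shell
# Use Dovecot, rather than Cyrus SASL, to check client credentials.
postconf -e smtpd_sasl_type=dovecot
# Socket path relative to the Postfix queue directory
# (assumed: /var/spool/postfix/private/auth, provided by Dovecot).
postconf -e smtpd_sasl_path=private/auth
# Turn on SASL authentication in the Postfix SMTP server.
postconf -e smtpd_sasl_auth_enable=yes
systemctl restart postfix
```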

However, before we do any of that, there is a very big problem: all email systems that allow clients to send email are a bleeding security hole, because they do not use ssh. They instead use human-memorable passwords. And, since we are using Dovecot in its simplest configuration, they use your users' login passwords, which you did not care about until now, because everyone logs in by ssh and you have set it up to be impossible to log in except by ssh. So you probably used short passwords, easy to guess and easy to type.

But once you install Dovecot, every phisher, spammer and scammer on the internet will be trying to log in as one of your users using lists of common passwords, and if one succeeds, he is going to use your resources to send his spam or scam to everyone in the world.

Big email providers have big, complex systems to monitor this, which require a great deal of work to set up and manage. You do not. So everyone who is going to be able to send email, which is to say all users on your system, has to have an unguessable password: ten or more characters of unguessable gibberish, or six or more human readable words.
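One way to produce ten or more characters of gibberish is to draw them from the kernel's random source. A minimal sketch, assuming a system with /dev/urandom; random_password is a made-up name, and the 16-character default is an arbitrary choice above the ten-character floor. With 62 possible characters, each one carries about 5.95 bits, so ten characters give roughly 60 bits and sixteen roughly 95.

```shell
#!/bin/sh
# random_password: hypothetical helper that prints a password made of
# letters and digits drawn from /dev/urandom. Length defaults to 16.
random_password() {
  len=${1:-16}
  # LC_ALL=C makes tr work on raw bytes; -dc deletes every byte that is
  # not in A-Z, a-z or 0-9, and head stops after len characters.
  LC_ALL=C tr -dc 'A-Za-z0-9' </dev/urandom | head -c "$len"
  echo
}
```

Then set the result for each user with passwd «nonroot-user».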

apt -y install libpam-pwquality
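On Debian, installing libpam-pwquality adds it to the PAM password stack, so passwd checks new passwords against it. A sketch of raising the minimum length to the ten characters recommended above, assuming the Debian/Ubuntu configuration file /etc/security/pwquality.conf; see pwquality.conf(5) for how its credit settings interact with minlen.

```shell
# Append active settings; on Debian the shipped file has its options
# commented out, so these become the values in effect.
echo 'minlen = 10' >> /etc/security/pwquality.conf
# By default root setting a password only gets a warning;
# enforce_for_root makes the check refuse weak passwords for root too.
echo 'enforce_for_root' >> /etc/security/pwquality.conf
```

Existing passwords are not re-checked, so reset any weak ones with passwd.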

In addition to spammers, you will also, far less frequently, but far more dangerously, have spearphishers armed with inside information. So every user on your system has to have a strong password.

Most of the proposals for integrating Postfix and Dovecot seem to be endlessly complicated, endlessly different, pull in no end of additional programs, and endlessly incompatible with Postfix working just by itself. The basic problem is that Postfix was written assuming one actual user for each email address, and lots of software throws away this useless vestigial appendix, which breaks the now obsolete architecture of Postfix, and each such re-invention has complicated workarounds for this architectural break, each of which works in its own unique ad hoc way.

But, since we got the old fashioned architecture of Postfix working, and our primary interest is using it in programs that assume the old fashioned architecture, we have to stick to the path that email users are Linux users, to avoid getting into deep waters. We are going to map arbitrary email addresses to arbitrary users, but for someone to send and receive email, he has to have a user account on the server.

PAM is just old fashioned Unix logon passwords. POP3S is just TLS-secured POP3.

Dovecot

Install and set up Dovecot, now that Postfix is working.

Satellite postfix

Suppose you have another domain name, which has no host. Then you can make it a virtual alias domain on your actual host, or better, a virtual mailbox domain. Virtual mailboxes rely on dovecot to actually deliver the mail to the person who reads it on his desktop computer. Postfix abandons the delivery problem.

But suppose you have another domain, and another actual host, and you don't want to go through all the grief involved in setting up email that works on it. So you point its MX record at your email host, and install Postfix on it as a satellite system.

Then nothing happens. Oops.

Since programs running on it are sending out emails in its domain name, your primary host has to enable it to relay. The simplest way is to add its IP address to your primary host's mynetworks. Now programs running on the other domain, such as WordPress, can send emails by relaying them through the primary host, without them being suppressed in transit as spam.
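A sketch of both halves, assuming the satellite's public address is 203.0.113.5 (a documentation placeholder; use the real one) and that the primary host is rhocoin.org. The first part runs on the primary host; the second runs on the satellite, and is roughly what Debian's "Satellite system" install option sets up for you. Note that postconf -e replaces the whole mynetworks value, so check the current value first and keep its loopback entries.

```shell
# On the primary host: see what mynetworks currently is...
postconf mynetworks
# ...then set it to Debian's default loopback networks plus the
# satellite's address (203.0.113.5 is a placeholder, not a real host).
postconf -e 'mynetworks = 127.0.0.0/8 [::ffff:127.0.0.0]/104 [::1]/128 203.0.113.5/32'
postfix reload

# On the satellite: hand all outgoing mail to the primary host.
# Square brackets tell Postfix to skip the MX lookup and connect
# to the host's own address records.
postconf -e 'relayhost = [rhocoin.org]'
postfix reload
```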

There are rather a lot of options and alternatives, and if you go down the wrong path, you will get stuck.

If you are the only one with a virtual mailbox, you might as well administer it by hand using only Postfix. But if you have to go to virtual mailboxes, there are probably several people involved, in which case administering them by hand is just far too painful, both for you and for those administered. Better to set up PostfixAdmin, and let those people administer themselves.


To be allowed to relay through the primary host, the other systems have to be listed in mynetworks, listed as virtual hosts, or something. There are rather too many ways to do it, but mynetworks just makes the issue go away.

Virtual domains and virtual users

Now that you have set this up, you don't want to set it all up again for each of several domain names corresponding to several hosts on the internet. You want to just put an MX record pointing to this host in each domain's DNS, so that people can send and receive email using that domain name, regardless of whether a physical server with a network address exists for it.

For each domain name that has an MX record pointing at this host, add the domain to virtual_alias_domains in /etc/postfix/main.cf:

postconf virtual_alias_domains

postconf -e virtual_alias_domains=reaction.la,blog.reaction.la

postconf -e virtual_alias_maps=hash:/etc/postfix/virtual

Now create the file /etc/postfix/virtual, which lists the email addresses in those domains and the local user that each one is delivered to.

ann@reaction.la ann

bob@reaction.la bob

carol@blog.reaction.la carol

dan@blog.reaction.la dan

@reaction.la blackhole

@blog.reaction.la blackhole

# ann, bob, carol, dan, and blackhole have to be actual users

# on the actual host, or entries in its aliases file, even if there

# is no way for them to actually log in except through an

# email client, and even if mail to blackhole goes unread and is

# eventually automatically deleted.

#

# The addresses without username catch all emails that do not

# have an entry.

# You don't want an error message response for invalid email

# addresses, as this may reveal too much to your enemies.

Every time your /etc/postfix/virtual is changed, you have to recompile it into a hash database that Postfix can actually use, with the command:

postmap /etc/postfix/virtual && postfix reload
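To check that the compiled table says what you think it says, postmap can query it directly. A sketch using the example addresses above. Note that postmap -q does a literal key lookup: it does not apply the fallback from an unlisted address to the @domain catch-all, which Postfix's virtual alias mechanism does, so query the catch-all key itself.

```shell
# Look up an explicit entry; should print the local user, ann.
postmap -q ann@reaction.la hash:/etc/postfix/virtual
# Look up the catch-all entry for the domain; should print blackhole.
postmap -q @reaction.la hash:/etc/postfix/virtual
```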

Set up an email client for a virtual domain

We have set up Postfix and Dovecot so that clients can only use SSL/TLS, and not STARTTLS.

In Thunderbird, we go to Account Settings / Account Actions / Add Mail Account.

We then enter the email address and password, and click on Configure manually.

Select SSL/TLS and Normal password.

For the server, Thunderbird will incorrectly propose .blog.reaction.la.

Put in the correct value, rhocoin.org, then click on re-test. Thunderbird will then correctly set the port numbers itself, which are the standard port numbers.
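The standard ports for SSL/TLS from the first byte are 465 for SMTP submission, 993 for IMAP and 995 for POP3. If Thunderbird cannot connect, you can test each one from the client machine with OpenSSL. This sketch assumes rhocoin.org is your mail host; only the services you actually enabled will answer.

```shell
# Each command should print the negotiated protocol and the server's
# certificate details, then exit because stdin is empty.
openssl s_client -connect rhocoin.org:465 -brief </dev/null   # SMTP submission
openssl s_client -connect rhocoin.org:993 -brief </dev/null   # IMAP
openssl s_client -connect rhocoin.org:995 -brief </dev/null   # POP3
```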